Five methods to increase governance over your data clients

The number of data sources, clients, interfaces, and stakeholders can overwhelm the people who must serve data correctly while shortening the time needed to distill and process it. Here are the top five actions you can take today to improve quality and governance, and to shorten the time your clients need to extract value from ingested data.

Define schemas.

A schema defines the structure of your data: the fields and types the other side expects to receive. Well-defined schemas, kept in a single source of truth that stores all your different models, are a must as you scale and more interfaces join the party and ask to consume from different data sources.

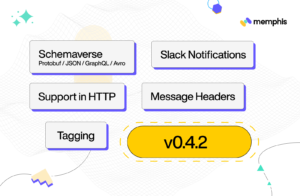

When a schema change is requested, you only need to make it once, in that single source of truth. (Have you had a chance to give schemaverse a spin?)
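To make the "single source of truth" idea concrete, here is a minimal sketch of an in-memory schema registry. It is an illustration only: `Schema`, `SchemaRegistry`, and the `user_signup` model are hypothetical names, and this is not how schemaverse or any particular product works.

```python
from dataclasses import dataclass


@dataclass
class Schema:
    name: str
    version: int
    fields: dict  # field name -> expected Python type


class SchemaRegistry:
    """Hypothetical in-memory registry acting as the single source of truth."""

    def __init__(self):
        self._schemas = {}  # schema name -> list of versions, oldest first

    def register(self, name, fields):
        # Each registration appends a new version instead of overwriting history.
        versions = self._schemas.setdefault(name, [])
        schema = Schema(name=name, version=len(versions) + 1, fields=dict(fields))
        versions.append(schema)
        return schema

    def latest(self, name):
        return self._schemas[name][-1]


registry = SchemaRegistry()
registry.register("user_signup", {"user_id": int, "email": str})

# A requested change is made once, here. Every consumer that asks the
# registry for the latest version sees it.
registry.register("user_signup", {"user_id": int, "email": str, "country": str})
```

Because consumers look the schema up by name rather than keeping their own copy, the change does not have to be repeated per client.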

Enforce.

You have to govern, or you will lose control. If you have agreed on a data contract, whether informally or in code, you need to enforce it.

Ensure data will not reach clients in a way they didn’t ask for.
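One way to enforce a contract is to validate every record against the schema before it is delivered, and to refuse delivery on any mismatch. The sketch below assumes a simple field-to-type schema; `ORDER_SCHEMA`, `validate`, `deliver`, and `ContractViolation` are hypothetical names chosen for the example.

```python
class ContractViolation(Exception):
    """Raised when a record does not match the agreed schema."""


# Hypothetical contract: field name -> expected Python type.
ORDER_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def validate(record, schema):
    """Return a list of human-readable violations (empty if the record is valid)."""
    errors = []
    for field, expected in schema.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            errors.append(f"{field}: expected {expected.__name__}, got {actual}")
    for field in record:
        if field not in schema:
            errors.append(f"unexpected field: {field}")
    return errors


def deliver(record, schema, send):
    """Call send(record) only if the record satisfies the contract."""
    errors = validate(record, schema)
    if errors:
        raise ContractViolation("; ".join(errors))
    send(record)


delivered = []
deliver({"order_id": 1, "amount": 9.5, "currency": "USD"}, ORDER_SCHEMA, delivered.append)
```

A record with a wrong type or a missing field raises `ContractViolation` instead of reaching the client.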

Dedicated identity per client.

As your organization grows, more and more “hands” will ask to interact with the ingested data. These can be different clients, applications, and/or stakeholders.

Make sure each client receives a unique identity that can be traced and monitored, so that when a data-level or infrastructure-level incident occurs, you know instantly who was affected, from which team, and what the business impact is. It will help you reach the root cause much faster than firing off Slack messages and emails to the entire organization.
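As a rough illustration, a per-client identity can be as small as a record that ties a client ID to a team and a short impact note, plus a lookup from data source to subscribed clients. All names below (`ClientIdentity`, `ClientRegistry`, the example clients and the `orders` source) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientIdentity:
    client_id: str
    team: str
    business_impact: str  # short note on what breaks if this client is affected


class ClientRegistry:
    """Hypothetical registry mapping each data source to the clients that consume it."""

    def __init__(self):
        self._subscriptions = {}  # source name -> set of ClientIdentity

    def subscribe(self, client, source):
        self._subscriptions.setdefault(source, set()).add(client)

    def affected_by(self, source):
        # Sorted so the answer is stable when shown in an incident report.
        clients = self._subscriptions.get(source, set())
        return sorted(clients, key=lambda c: c.client_id)


clients = ClientRegistry()
clients.subscribe(ClientIdentity("billing-service", "payments", "invoices are delayed"), "orders")
clients.subscribe(ClientIdentity("reporting-dashboard", "analytics", "daily report is stale"), "orders")
clients.subscribe(ClientIdentity("search-indexer", "platform", "search results lag"), "catalog")

# During an incident on the "orders" source, list who is affected and why.
for client in clients.affected_by("orders"):
    print(client.client_id, client.team, client.business_impact)
```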

Auditing.

In federated architectures and data pipelines, where multiple domains, teams, and clients are often intertwined, one configuration change can set off a chain reaction that ultimately crashes the entire process. Auditing is crucial because it lets you trace a failure back to the upstream change that caused it, rather than starting the investigation somewhere in the middle.
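The sketch below shows one way an audit trail could support that upstream tracing: every configuration change is recorded with an actor and a target, and when a component fails, the log is filtered to changes made on that component or anything upstream of it. The dependency map `UPSTREAM`, the component names, and the `AuditLog` class are assumptions made for the example.

```python
from datetime import datetime, timezone


class AuditLog:
    """Hypothetical append-only log of configuration changes."""

    def __init__(self):
        self.entries = []

    def record(self, actor, target, change):
        entry = {
            "at": datetime.now(timezone.utc),
            "actor": actor,
            "target": target,
            "change": change,
        }
        self.entries.append(entry)
        return entry


# Assumed dependency map: component -> components it reads from.
UPSTREAM = {"dashboard": ["warehouse"], "warehouse": ["ingest"], "ingest": []}


def upstream_of(component, graph):
    """Return the component plus everything it depends on, directly or indirectly."""
    seen, stack = [], [component]
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.append(node)
            stack.extend(graph.get(node, []))
    return seen


def changes_upstream(log, component, graph):
    """Audit entries that could explain a failure observed at `component`."""
    targets = set(upstream_of(component, graph))
    return [e for e in log.entries if e["target"] in targets]


log = AuditLog()
log.record("alice", "ingest", "batch_size 500 -> 50")
log.record("bob", "email-service", "retry_limit 3 -> 5")

# The dashboard broke: which recorded changes sit upstream of it?
suspects = changes_upstream(log, "dashboard", UPSTREAM)
```

Here only the change to `ingest` is returned, since `email-service` is not upstream of the dashboard in the assumed map.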

Notifications.

Last but not least, notifications. Don’t wait to react until your staging environment or your tables/documents are already misaligned, or backends start to crash. Configure notifications at every step of your pipeline, so that you are notified immediately when something goes wrong and data is not ingested as it should be. Combining a dedicated identity with auditing and clear notifications will let you sleep better. If you are woken in the middle of the night, it will be for a short time and with all the information needed to fix the problem.
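A minimal sketch of per-step notifications, assuming a pipeline expressed as a list of named functions: each step is wrapped so that a failure triggers a notification naming the step before the error propagates. The `notify` callback stands in for whatever channel you use (chat, email, paging); `run_pipeline`, `parse`, and `check_positive` are hypothetical names.

```python
def run_pipeline(steps, record, notify):
    """Run each (name, function) step in order, notifying on the first failure."""
    for name, step in steps:
        try:
            record = step(record)
        except Exception as exc:
            # Notify with the failing step's name, then re-raise so the
            # failure is not silently swallowed.
            notify(f"step '{name}' failed: {exc}")
            raise
    return record


def parse(raw):
    return {"amount": float(raw["amount"])}


def check_positive(rec):
    if rec["amount"] <= 0:
        raise ValueError("amount must be positive")
    return rec


steps = [("parse", parse), ("check_positive", check_positive)]
alerts = []  # stand-in for a real notification channel

ok = run_pipeline(steps, {"amount": "12.5"}, alerts.append)

try:
    run_pipeline(steps, {"amount": "-1"}, alerts.append)
except ValueError:
    pass  # the alert has already been sent by run_pipeline
```

Including the step name in the message means the person on call knows where in the pipeline to look before opening a single log.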