Journey to Event Driven – Part 2: Programming Models for the Event-Driven Architecture

What is Event-Driven Architecture?

Before delving into different programming models for Event-Driven Architecture, let’s first try to understand what exactly Event-Driven Architecture is.

Event-Driven Architecture is an architecture paradigm that uses events to communicate among decoupled services. It is a well-known architecture when dealing with applications built with microservices. But what do we mean by an event?

An event can be defined as a change or an update in the state. For instance, when a customer buys an item on an e-commerce website, the state of the item changes from “for sale” to “sold”. This change can, therefore, be treated as an event and should be propagated to other services within that architecture.

Today, the term “event” can refer either to the state change or to the notification of that change. In the example above, the notification that the item has been shipped can also be treated as an event.

Good! Now that we have the definition right, let’s dive into its structure a little bit.

Key Components of Event-Driven Architecture

Event-Driven Architecture can be divided into 3 main components –

- Event Producer: An event producer senses a change and represents that change as an event.

- Event Router: The event is, then, published to the router. The event router filters and pushes the event to event consumers.

- Event Consumers: An event consumer has the responsibility to react to the event as soon as the event information reaches it. The reaction could be self-contained or send the information to another component within the architecture.
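The three components above can be sketched in a few lines of Python. This is only an in-memory illustration: the `EventRouter` class, the `item.sold` event type, and the event dictionary shape are assumptions made for this example, not part of any particular broker's API.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Event = dict  # hypothetical event shape: {"type": ..., "payload": ...}
Handler = Callable[[Event], None]


class EventRouter:
    """In-memory stand-in for an event router (illustrative only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        # Consumers register interest in one event type.
        self._subscribers[event_type].append(handler)

    def publish(self, event: Event) -> int:
        # Filter by event type, then push the event to every matching consumer.
        handlers = self._subscribers.get(event["type"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


def item_sold_event(item_id: str) -> Event:
    """Producer side: represent a state change as an event."""
    return {"type": "item.sold", "payload": {"item_id": item_id, "state": "sold"}}


router = EventRouter()
received: List[Event] = []            # a trivial consumer that records events
router.subscribe("item.sold", received.append)
delivered = router.publish(item_sold_event("sku-123"))
```

Note that the producer never references the consumer directly; it only knows the router and the event type.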

Great! Now we know how Event-Driven Architecture works. But how exactly can you benefit from it?

Benefits of Event-Driven Architecture

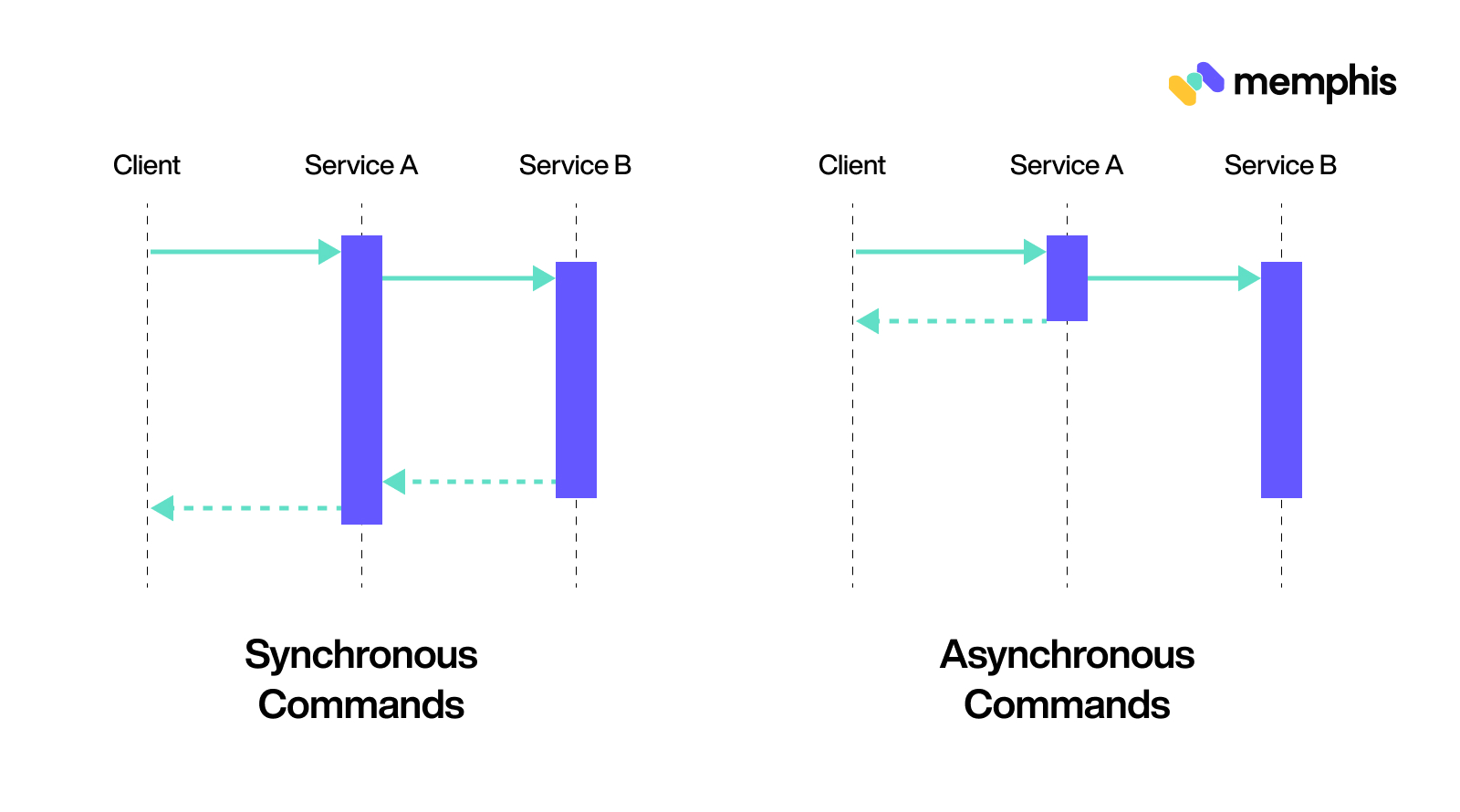

One of the biggest benefits Event-Driven Architecture offers is the ability to communicate asynchronously. But what is the difference between synchronous and asynchronous communication?

Synchronous communication requires both parties to be available at the same time and follows the request-response pattern. For example, a phone call is synchronous – you need the other person to pick up the call to communicate. In contrast, asynchronous communication can happen in its own time and does not require scheduling.

While building an application – if it is based on Request-Response Architecture, then the components will communicate via API calls. This means that a client will send a request and wait for the response before moving on to a new task. However, in Event-Driven Architecture, an event is generated and then the client can move on to its new task without waiting for a response. The Event Consumer reacts to the event as needed.
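To make the contrast concrete, here is a minimal sketch using only the Python standard library. The function names and the simulated 50 ms delay are assumptions for illustration; a real system would use a message broker rather than an in-process queue.

```python
import queue
import threading
import time
from typing import List, Optional


def notify_warehouse(order_id: str) -> str:
    """Stand-in for a slow downstream call."""
    time.sleep(0.05)
    return f"warehouse notified for {order_id}"


def place_order_request_response(order_id: str) -> str:
    # The caller blocks here until the downstream service responds.
    return notify_warehouse(order_id)


order_events: "queue.Queue[Optional[str]]" = queue.Queue()
handled: List[str] = []


def warehouse_consumer() -> None:
    # Reacts to events whenever they arrive; None is a shutdown signal.
    while True:
        order_id = order_events.get()
        if order_id is None:
            break
        handled.append(notify_warehouse(order_id))


def place_order_event_driven(order_id: str) -> str:
    # Emit the event and return immediately without waiting for a response.
    order_events.put(order_id)
    return f"order {order_id} accepted"


worker = threading.Thread(target=warehouse_consumer)
worker.start()
sync_reply = place_order_request_response("o-1")   # waits for the warehouse
async_reply = place_order_event_driven("o-2")      # returns right away
order_events.put(None)
worker.join()
```

In the event-driven variant the client gets its acknowledgement before the warehouse has done any work; the consumer catches up in its own time.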

Because of its independent nature, Event-Driven Architecture can provide the following benefits:

1. Loose Coupling

Event-Driven Architecture promotes loose coupling of components. Applications can subscribe to an event that is independent of other events. This means that event publishers and subscribers can change without interfering with each other.

This also gives applications the ability to add or modify any features at a faster rate. One does not have to deal with technical inconsistencies because of other components.

2. Scaling

Event-Driven Architecture also gives the ability to scale up or down easily. This is possible because of loose coupling and independence between the functioning of events. This feature is particularly important when the traffic increases.

For instance, on an e-commerce website such as Amazon, there are festive discounts and on those occasions, the sale is bound to increase. As more customers place their orders, the load on the website increases. If the application is based on a Request-Response architecture, then it might lead to overloading as customers are placing their orders quicker than the warehouse can ship and respond. Thus, we need a system where these events can function independently, i.e. sending a confirmation email to a customer and sending the order information to the warehouse.

The ability to scale up and down easily also decreases the overall probability of system failure.

What else do we know about Event-Driven Architecture?

We know that Event-Driven Architecture is centered around events. This means we get to determine how to receive those events and what actions to take upon reception. This takes us to our next section where we talk about two main architectures – Reactive and Streaming Architecture.

And it turns out that both Reactive and Streaming Architecture work well with Event-Driven Architecture (EDA)!

Reactive Architecture

Reactive Architecture is a kind of architecture that uses EDA to develop elastic, responsive, and resilient systems.

But why do we need such an architecture for our applications?

Simply because of the benefits it offers. Businesses nowadays want to provide immediate responses and feedback to their end users. To do that, developers rely on microservices. But applications built on microservices also need to cope with overloading and failure. To resolve this issue, developers are adopting the principles of the Reactive Manifesto.

Now you may ask, what is the Reactive Manifesto?

The Reactive Manifesto

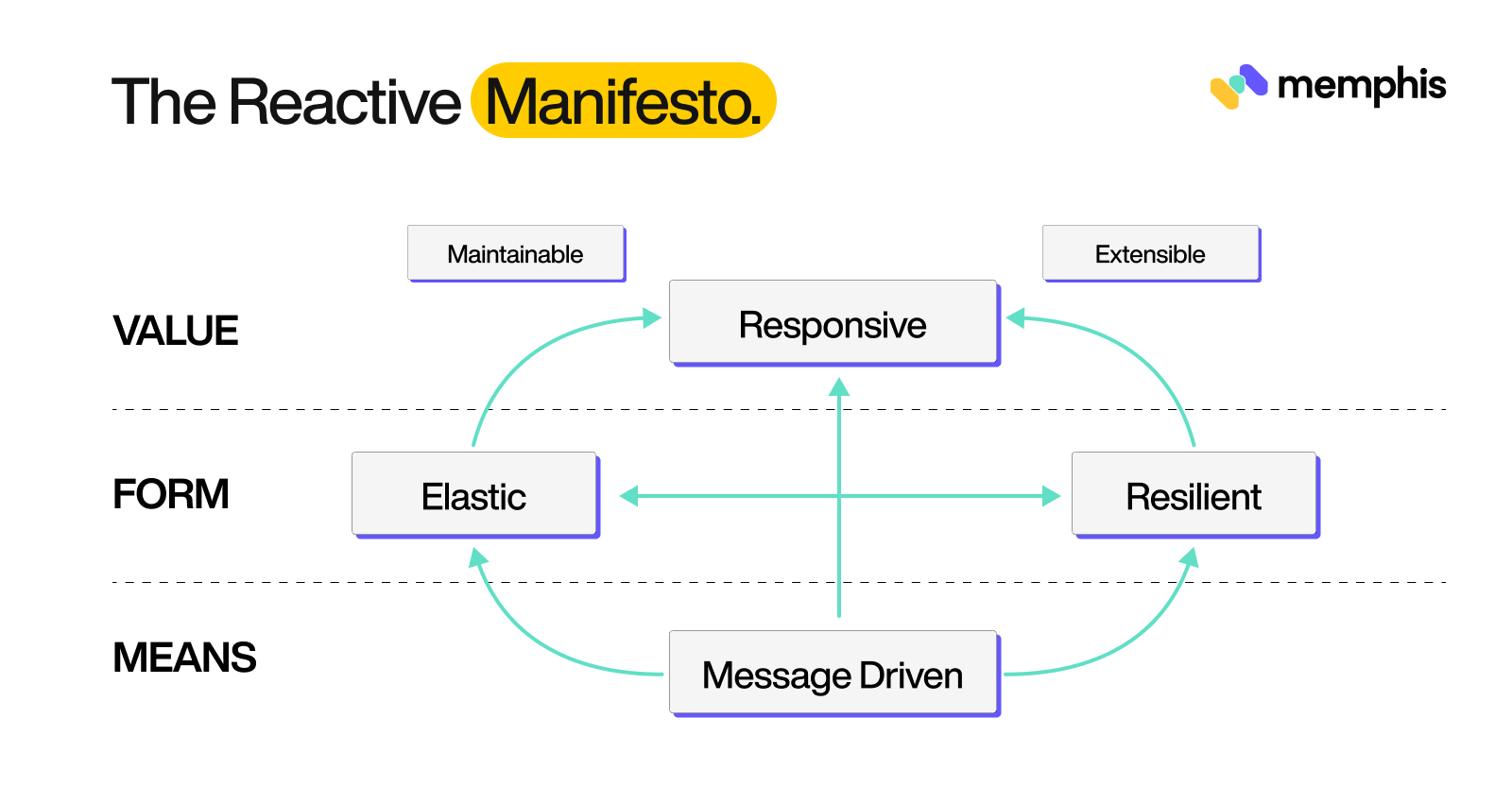

The Reactive Manifesto includes 4 aspects necessary for a coherent system architecture:

1. Responsive: A responsive system focuses on providing quick and consistent responses. This not only ensures good quality of service for the customer but also helps in detecting any system flaws promptly. Thus, a responsive system helps in better error handling and end-user experience.

2. Resilient: A resilient system should remain responsive in the face of failure as well. Resilience is accomplished by having a distributed system. This implies that failure should be contained within each component without compromising the whole system. According to the manifesto, a system can be made resilient by –

- Replication: Executing a component simultaneously on a different thread pool or network node. This ensures scalability.

- Containment: Containing the failure within each component so that the whole system does not get compromised.

- Isolation: Isolating the components from each other – i.e. loose coupling. It makes the system easier to extend, test, and evolve.

- Delegation: Delegation means handing over the execution of a task to a different component. The other component could be on a different thread or a different network node. This frees the delegating node to do some other processing or to take action in case of a failure.

3. Elastic: Depending on the workload, the system should increase or decrease the allocation of resources such as memory storage, CPU cache, network bandwidth, etc. Having an elastic system means that there should not be any central bottlenecks or contention points. This will allow the system to share or duplicate components and distribute the load (input) among each other.

4. Message Driven: To enable loose coupling, reactive systems utilize asynchronous message passing. Such message-driven systems help to establish a boundary between components. This further helps with isolation and location transparency. Thus, this characteristic works closely with the other three aspects of the reactive system we talked about earlier. It is all interconnected. The Reactive Manifesto figure above illustrates this relationship.

Benefits of Reactive Architecture

1. Effective use of resources

As each component is independent, the overall utilization of computer resources such as GPU, CPU, etc. increases. This is because one component does not have to wait for another component to finish its process. Thus, multi-core systems can be utilized better. This also leads to better performance as the serialization of tasks is reduced.

2. Developer Productivity

Reactive Architecture not only benefits the user but also the developers. In a monolithic architecture, the time taken to deal with failure increases because the components are not isolated, and hence, the overall dependency increases. Reactive Architecture solves this problem by providing a way to decouple components. There is no need for synchronous communication anymore.

Moreover, it has introduced a comprehensive layout to design your application which saves the time of the people at the backend.

3. Overloading Management

The Reactive Manifesto also takes care of the over-utilization of resources by introducing back-pressure. Back-pressure is a feedback mechanism used when a component cannot keep up with the load. Because reactive architecture does not advocate dropping messages uncontrollably, the overloaded component must communicate that it is under stress to the components sending it work.

This is not only important to decrease the load on that component but also to reduce the chances of overall failure. Back-pressure also makes sure that the system remains resilient even when the load increases. Moreover, by providing the information that the component is not able to deal with the load, the system may itself try to reallocate resources to distribute the load – thus, promoting Elasticity.
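One simple way to picture back-pressure is a bounded inbox that refuses new messages when full, so the sender learns that it must slow down. The sketch below is an assumption-laden illustration (the `BoundedInbox` class and `send_all` helper are hypothetical names), not how any specific reactive library implements it.

```python
import queue
from typing import List


class BoundedInbox:
    """A consumer inbox with fixed capacity (illustrative only)."""

    def __init__(self, capacity: int) -> None:
        self._queue: "queue.Queue[str]" = queue.Queue(maxsize=capacity)

    def offer(self, message: str) -> bool:
        """Return True if accepted, False to signal back-pressure."""
        try:
            self._queue.put_nowait(message)
            return True
        except queue.Full:
            return False

    def take(self) -> str:
        return self._queue.get_nowait()


def send_all(inbox: BoundedInbox, messages: List[str]) -> List[str]:
    """Send until the consumer pushes back; return what is still pending."""
    for index, message in enumerate(messages):
        if not inbox.offer(message):
            # Nothing is dropped: unsent messages go back to the caller to retry.
            return messages[index:]
    return []


inbox = BoundedInbox(capacity=2)
pending = send_all(inbox, ["a", "b", "c", "d"])
```

Here the producer keeps the unsent messages instead of losing them, and can retry once the consumer has drained part of its inbox.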

Reactive Manifesto and Event-Driven Architecture (EDA)

After understanding the fundamentals of the Reactive Manifesto, it is not wrong to say that it resonates well with the Event-Driven microservice implementation. EDA supports the reactive manifesto. After all, to implement and develop Event-Driven microservices, one would use reactive libraries to take advantage of the non-blocking algorithms.

However, there is one significant aspect where the two differ – message-driven vs. Event-Driven. Reactive Manifesto advertises itself to be message-driven, unlike EDA which circles itself around events.

But what is the difference between a message-driven and Event-Driven Architecture?

Consider a scenario where component A has to convey some information to component B. In traditional models, this was achieved by component A invoking a method in component B. However, the problem with this model is that if component B is busy, A would have to wait. Thus, the two are coupled in time.

With Event-Driven Architecture, components announce the location where they would send such information (events). Therefore, in the above example, A would send out events to a well-known location without knowing the receiver component of such events. Component B can then subscribe to such kind of events to receive this information.

In contrast, the message-driven architecture allows component A to send the information directly to the address of Component B. In this case, component A does not have to wait for component B to respond or handle the process. It can get back to its own task after sending the information. Therefore, decoupling is possible in a message-driven architecture.
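The difference in addressing can be sketched as follows. The `Component` and `Topic` classes are hypothetical, and appending to an inbox list stands in for asynchronous delivery: the sender returns as soon as the payload is placed in the inbox, and the receiver processes it later.

```python
from typing import List


class Component:
    """A component with an inbox it processes in its own time."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.inbox: List[str] = []

    def receive(self, payload: str) -> None:
        self.inbox.append(payload)


def send_message(recipient: Component, payload: str) -> None:
    # Message-driven: the sender addresses one specific recipient.
    recipient.receive(payload)


class Topic:
    """Event-driven: a well-known location that senders publish to."""

    def __init__(self) -> None:
        self._subscribers: List[Component] = []

    def subscribe(self, component: Component) -> None:
        self._subscribers.append(component)

    def publish(self, payload: str) -> None:
        # The sender does not know who, if anyone, is listening.
        for subscriber in self._subscribers:
            subscriber.receive(payload)


b = Component("B")
c = Component("C")
send_message(b, "direct to B")        # only B receives this

order_topic = Topic()
order_topic.subscribe(b)
order_topic.subscribe(c)
order_topic.publish("order placed")   # every subscriber receives this
```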

However, the comparison between the two may not be valid at times. Here’s why:

We know that it can be difficult to accomplish resilience and decoupling in an Event-Driven Architecture directly. However, if one pays close attention to the fundamentals, a message-driven architecture can serve as the building block of an Event-Driven system. Thus, we can build an Event-Driven system using the message-driven system as a tool.

We know that Reactive Architecture interconnects with EDA well. However, it is just one of the many architectural paradigms. It has surely helped modernize how we see applications today and has helped to deal with issues such as failure, overloading, coupling, isolation, etc.

But remember – like any other technology, it is just a tool. It cannot be generalized for any or all use cases. This takes us to our next section.

Let us have a look at another well-known architecture we mentioned earlier in this article – Streaming Architecture.

Streaming Architecture

It’s hard to find businesses these days without an online presence. But with that online presence, come streams of data.

And how do you handle such an enormous amount of data?

The answer is event streaming. But what is event streaming and how is it related to streaming architecture?

To put it simply, event streaming is a way to help businesses analyze data corresponding to different events and respond to those events in real time. Streaming architecture, on the other hand, is the kind of architecture that makes it possible to manage these massive data streams from a variety of sources. Because the two are so closely related, the terms are often used interchangeably.

The next question that arises is – what can be achieved using all this data?

Benefits of Event Streaming

1. Real-Time Feedback and Insights

One of the biggest benefits event streaming offers is the ability to act on real-time data. For example, consider a scenario where an item on an e-commerce website goes “out of stock”. With real-time data, you get this information instantly. This would help your business restock the item and hence, decrease the risk of losing customers.

2. Using Data to Improve your Business

With the help of event streaming, you can process huge blocks of data. This data also includes information on some key things which can help improve your business. To illustrate this further, consider a scenario of airline A. A has information on time spent on its website, key dates, list of public holidays, etc. Using this data, A can alter its pricing to gain more profit or offer holiday discounts to attract more customers.

3. Better User Experience

Lastly, with access to real-time insights, you can create a more engaging customer experience. Consider the same scenario of owning an e-commerce website. By responding promptly to feedback such as “item out of stock” or “defective item”, you show that you care about the customer. This leads to an overall better end-user experience.

4. Scalability

With the help of event streaming, we can respond to thousands of requests in real-time instead of putting those in a queue. For example, during peak hours or on weekends, a ticket for a show might sell more. Rather than making the customers wait, we can create separate events which will be dealt with in real-time. This will decrease the overall wait time. Hence, event-streaming architecture promotes scalability.

Let us look at a real-world example where event streaming can prove to be useful.

Real-world Application of Event Streaming

Example 1: Online Payment

We have all tried to reserve a favourite seat for a movie or book a ticket for that fascinating light and sound show, only for our hopes to come crashing down when the payment page gets stuck. We (sometimes) refresh the page only to see a failed transaction or, worse, a transaction “in process”.

If you are lucky enough, then hopefully the amount is not debited just yet from your account. As for me, I often get that “your money will be refunded in 15 working days” message.

But why am I revisiting my financial struggles?

Because event streaming can help solve this problem to give us a better user experience (and bank balance). But how?

For each transaction, we can have separate events, each fulfilling only one part of the transaction. With the help of event streaming, these can be handled concurrently without one event affecting another. The result is a smoother experience with fewer transaction failures.

Taking the same example, one event can handle the payment request to update the total number of payments being processed at the moment. Another subsequent event can be responsible for validating the payment per user. A third event can then update the user’s balance after the payment is successful.
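The three-event flow described above might look roughly like this. The event type names, the `stats` counter, and the balance dictionary are all assumptions for illustration, and the loop is single-threaded for readability; in a real stream each step would be handled by a separate consumer.

```python
from collections import deque
from typing import Deque, Dict, List


def run_payment_flow(request: dict, balances: Dict[str, int],
                     stats: Dict[str, int]) -> List[str]:
    """Process one payment as a chain of small events; return the types seen."""
    pending: Deque[dict] = deque([{**request, "type": "payment.requested"}])
    seen: List[str] = []
    while pending:
        event = pending.popleft()
        seen.append(event["type"])
        if event["type"] == "payment.requested":
            # Event 1: track how many payments are currently being processed.
            stats["in_flight"] = stats.get("in_flight", 0) + 1
            pending.append({**event, "type": "payment.validation_needed"})
        elif event["type"] == "payment.validation_needed":
            # Event 2: validate the payment for this user.
            ok = balances.get(event["user"], 0) >= event["amount"]
            next_type = "payment.approved" if ok else "payment.rejected"
            pending.append({**event, "type": next_type})
        elif event["type"] == "payment.approved":
            # Event 3: update the balance only after a successful payment.
            balances[event["user"]] -= event["amount"]
            stats["in_flight"] -= 1
        elif event["type"] == "payment.rejected":
            stats["in_flight"] -= 1
    return seen


balances = {"alice": 100}
stats: Dict[str, int] = {}
approved_flow = run_payment_flow({"user": "alice", "amount": 30}, balances, stats)
rejected_flow = run_payment_flow({"user": "alice", "amount": 500}, balances, stats)
```

Because each step only reacts to its own event type, a rejected payment never reaches the balance update.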

Example 2: Cybersecurity

With millions of users and a large pool of data online come faulty transactions and digital fraud. Therefore, cybersecurity has become more important than ever.

We have all come across suspicious websites and anonymous people online trying to scam us by offering the “deal of a lifetime”.

Event streaming makes it possible to gather such data and detect whether there exists a correlation between these incidents. Here’s how –

- Collect information (events) from diverse sources, especially data sources which demand a live user environment

- Filter this data to get more information on malignant vs. benign attacks. This can help us with reducing false positives.

- Use the benefit of real-time analysis to correlate this information among different data sources.

- Forward priority information (events) to security management systems.
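The filter, correlate, and forward steps above can be sketched as a small function. The event fields (`ip`, `source`, `suspicious`) and the rule "flag an IP reported by at least two independent sources" are assumptions chosen for this example; real detection rules are far richer.

```python
from collections import defaultdict
from typing import Dict, List, Set


def correlate_alerts(events: List[dict], min_sources: int = 2) -> List[str]:
    """Return IPs flagged as suspicious by at least `min_sources` data sources."""
    # Filter: keep only events already marked as suspicious.
    suspicious = [event for event in events if event["suspicious"]]

    # Correlate: record which data sources reported each IP.
    sources_by_ip: Dict[str, Set[str]] = defaultdict(set)
    for event in suspicious:
        sources_by_ip[event["ip"]].add(event["source"])

    # Forward: only IPs confirmed by several sources are escalated.
    return sorted(ip for ip, sources in sources_by_ip.items()
                  if len(sources) >= min_sources)


collected = [
    {"ip": "10.0.0.1", "source": "web_logs", "suspicious": True},
    {"ip": "10.0.0.1", "source": "payment_gateway", "suspicious": True},
    {"ip": "10.0.0.2", "source": "web_logs", "suspicious": True},
    {"ip": "10.0.0.3", "source": "web_logs", "suspicious": False},
]
priority_ips = correlate_alerts(collected)
```

Requiring agreement between sources is one simple way to reduce false positives before anything is forwarded to a security management system.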

Now that we know how event streaming can benefit our businesses, it is also important to focus on the architecture. Later on, this will help us compare the streaming architecture with EDA.

Key Components of Streaming Architecture

1. Message Brokers

The first step to make event streaming possible is to collect the data from the source and transform it into a format understood by other components. This is the job of message brokers (stream processors). After converting the source data into a standard message format, message brokers then stream the data continuously for other components to consume and act on.

Some of the widely known stream processing tools include Memphis, Apache Kafka, Microsoft Azure, etc.

2. Processing Tools

After receiving the data from the message broker or stream processors, we need to process this data before sending it for further analysis. Some famous processing tools used for this task are Apache Spark, Apache Storm, etc. Microsoft Azure Databricks supports Apache Spark and other processing tools as well.

3. Data Analytics

Now that our data is processed by the processing tools, it is passed on for analysis. These analytics can then be used for creating interactive dashboards, failure alerts, or new event streams altogether!

Of course, there is no single analytics tool for everyone. Every business has different needs and therefore, there are a plethora of tools available online.

Query engines such as Presto and Hive are commonly used for querying data stored in various data sources. Presto uses Structured Query Language (SQL), and Hive uses HiveQL, a SQL-like dialect.

For searching text, Elasticsearch can be a great choice. It is a distributed analytics engine that helps in searching, storing, and managing data. Elasticsearch can be used for logs, metrics, application monitoring, etc.

In addition to Microsoft Azure, other cloud service providers such as Google Cloud, and Amazon Web Services (AWS) offer the deployment of these tools in one place.

4. Data Storage

Storing past data has become important for businesses because it helps them improve their user experience using Machine Learning and Data Analytics. But where do we store this past data?

Because of the rapid development of cloud services, data storage costs over the cloud are relatively low these days. This helps businesses store a large amount of data for a more extended period. However, data received from different sources may have different data types.

Then how do you store different kinds of data in one place?

The practical solution is to create a Data Lake. Data Lake is a centralized repository or system where you can store both structured and unstructured data in its original form (raw form) without having to worry about its preprocessing.

Services such as AWS, Google Cloud, etc. provide you with the facilities to create or host your data lake. It gives you the ability to import any kind of data in real-time.

Another solution for data storage is to have a Data Warehouse. Data Warehouse is a database optimized for analyzing data received from transactions or other applications.

But what is the difference between a Data Warehouse and a Data Lake?

A Data Lake differs from a Data Warehouse in that it does not require the structure and relations of the data to be defined up front. While a Data Warehouse gives faster query results, it also comes at a higher cost compared to a Data Lake. Having a Data Lake is, therefore, a cheap and flexible option. Depending on your business needs, you may need both.

Let us now move on to understand the difference between Streaming Architecture and Event-Driven Architecture.

Streaming Architecture and Event-Driven Architecture

As the name suggests, both event streaming and Event-Driven Architecture are centered around events. The difference between the two is how the events are received.

As we saw before, in Event-Driven Architecture, events are published to communicate to other applications or decoupled services.

On the other hand, event streaming tools such as Memphis produce streams of data (events) which are then processed by the message brokers (or stream processors). These event streams can be accessed via these platforms both in real-time and later on for building metrics, dashboards, etc.

Fortunately, you do not have to choose one over the other. Much like reactive architecture, event streaming can also work in conjunction with Event-Driven Architecture. For instance, the communication among services provided by the Event-Driven Architecture can be combined with real-time event processing by event streaming.

This will allow your business to give rapid responses. Moreover, with all the data available in real-time and (later) stored in a data lake, you can enhance the user experience using Machine Learning.

In conclusion, Event-Driven Architecture can be combined with both event streaming and reactive architecture to achieve better results. But how?

Surely, we have mentioned that EDA can be combined with applications running on microservices. But we never discussed how these two work together.

How is Event-Driven Architecture related to Microservices?

Microservices are a collection of small, loosely-coupled services that communicate with each other to run an application. They have the same benefits as the reactive architecture because of their ability to provide isolation.

Since services are independent, it does not bring the whole system down in case of failure, unlike the traditional monolithic architecture. Moreover, services can run in a container that includes the code, libraries, and other dependencies. This makes them highly scalable.

For the same reason, each service can have its own codebase with its own dependencies. This gives services the ability to be deployed to diverse environments.

The containerized nature of the application allows for more isolation, so each service can be tested and deployed individually. This means that a change in one microservice does not trigger a change in all microservices.

But how does it all come together? How does a microservice know when to perform the desired action when all of it is so isolated?

We need some mode of communication among these microservices. The classic way of communication is to wait for an action from the user which means it requires human intervention. But it does not align with microservices’ motto of being independent and isolated.

That’s where Event-Driven Architecture (EDA) comes into play!

The basic idea is to detect a change in state (event) automatically and let the system respond to it appropriately. That is exactly what EDA does.

Any significant change is detected and converted to an event by the Event Producer. The event is, then, sent to the Event Router which filters and pushes it to Event Consumers. Lastly, the Event Consumer reacts to the event aptly.

Thus, EDA makes the process of communication among microservices fluid.

In this section, we saw that EDA and microservices work well. But does your business need to operate on microservices in the first place?

If yes, then we need to talk about the time, cost, and effort it requires to maintain such an architecture. Is there anything we’re missing out on?

Do you need Microservices?

Microservices are surely a great way to provide scalability and agility to your business. But it is more important to know when to use microservices and when not to use microservices.

Factors to consider:

If you are looking to scale your business and want your application to be able to run across diverse platforms, containerized applications are the way to go. On the contrary, if you own a local business such as a restaurant that delivers to selected locations within a city, you do not need a highly sophisticated application built on microservices.

One should have a really good reason to introduce microservices into their business. This is because the implementation can be costly in terms of both time and money. For instance, it does not make sense for the restaurant owner (in the example above) to buy cloud services for its online website but it might make sense for a tech start-up to do so.

Another factor to consider is whether the money spent on resources to implement microservices will be worth it in the future. One needs to ask – “Is there enough scope for profit if the business does manage to scale up at an unprecedented rate?”. Continuing with the same example, if the restaurant owner can deliver to only selected locations within one city, then scaling up would cost them more, and the profit earned from the new application would not justify the resources spent.

Additionally, most applications switch to microservices rather than beginning from microservices. If you already have a working monolithic application, then there is a chance that microservices do not coincide with the existing implementation. Not every microservice is the same and hence, it can lead to inconsistencies in the beginning.

Lastly, the Service Decomposition Paradox. As microservices become smaller, their functionality becomes singular. This makes them more reusable. But it also means that the number of microservices required to accomplish a task becomes larger. Since services need to communicate with each other, there is some network latency involved. And as the number of services increases, so does the latency.

Hence, the paradox – do you want your microservices to be specific and reusable, or do you want to minimize latency? This trade-off can lead to instability which affects the overall user experience.
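A back-of-the-envelope calculation shows the trade-off. The sketch below assumes the services are called one after another in a chain and that every network hop costs the same; both are simplifications, and the numbers are made up for illustration.

```python
def chain_latency_ms(num_services: int, per_hop_ms: float,
                     total_work_ms: float) -> float:
    """Estimate end-to-end latency for a sequential chain of services.

    Assumes the total compute work stays constant however it is split,
    and that each service-to-service call adds a fixed network cost.
    """
    hops = max(num_services - 1, 0)
    return total_work_ms + hops * per_hop_ms


monolith = chain_latency_ms(num_services=1, per_hop_ms=2.0, total_work_ms=50.0)
fine_grained = chain_latency_ms(num_services=11, per_hop_ms=2.0, total_work_ms=50.0)
```

Under these assumptions, splitting the same 50 ms of work across 11 services adds 10 hops, so the request takes 70 ms instead of 50 ms even though no extra work is done.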

So, to summarize:

What do Microservices offer?

- Scalability

- Isolation

- Independence to deploy your application across different platforms

- Failure Management (the system will not collapse)

Potential Challenges

- Time-consuming

- Additional costs and resources required

- Decomposition Paradox

- Difficult to implement at first

After taking into consideration the benefits and potential challenges, if you decide to introduce microservices to your business, then here’s a tip for you!

Don’t do it all at once.

If you are trying microservices out, then pick up one key thing you would like to accomplish in your application using microservices. After that little modification, ask yourself – is the distributed system better than your monolith application? Was it worth the time and resources?

If the answer to those questions is yes, then you can slowly try to build a distributed system based on microservices. This approach is better than spending six months trying to build a whole new distributed system and then seeing how it works. It saves both cost and time.

Memphis can help you here. It is an open-source real-time data processing tool that gives you end-to-end support for in-app streaming, asynchronous communication between services, and much more!

Now, let’s talk about some real-world applications of things we have talked about so far.

Real-world application of Event-Driven Architecture

Consider a scenario of an e-commerce website such as Amazon. From adding an item to the cart to placing an order, there are multiple tasks to be done. Of course, you do not want your application to wait for one task to complete in order to initiate the other one.

These tasks can be completed asynchronously with the help of Event-Driven Architecture.

Example 1: Calling other Services

Suppose that when an order is placed, there are 3 things to be done –

- Sending an email confirming the order

- Sending an SMS

- Calling a third-party application for further actions

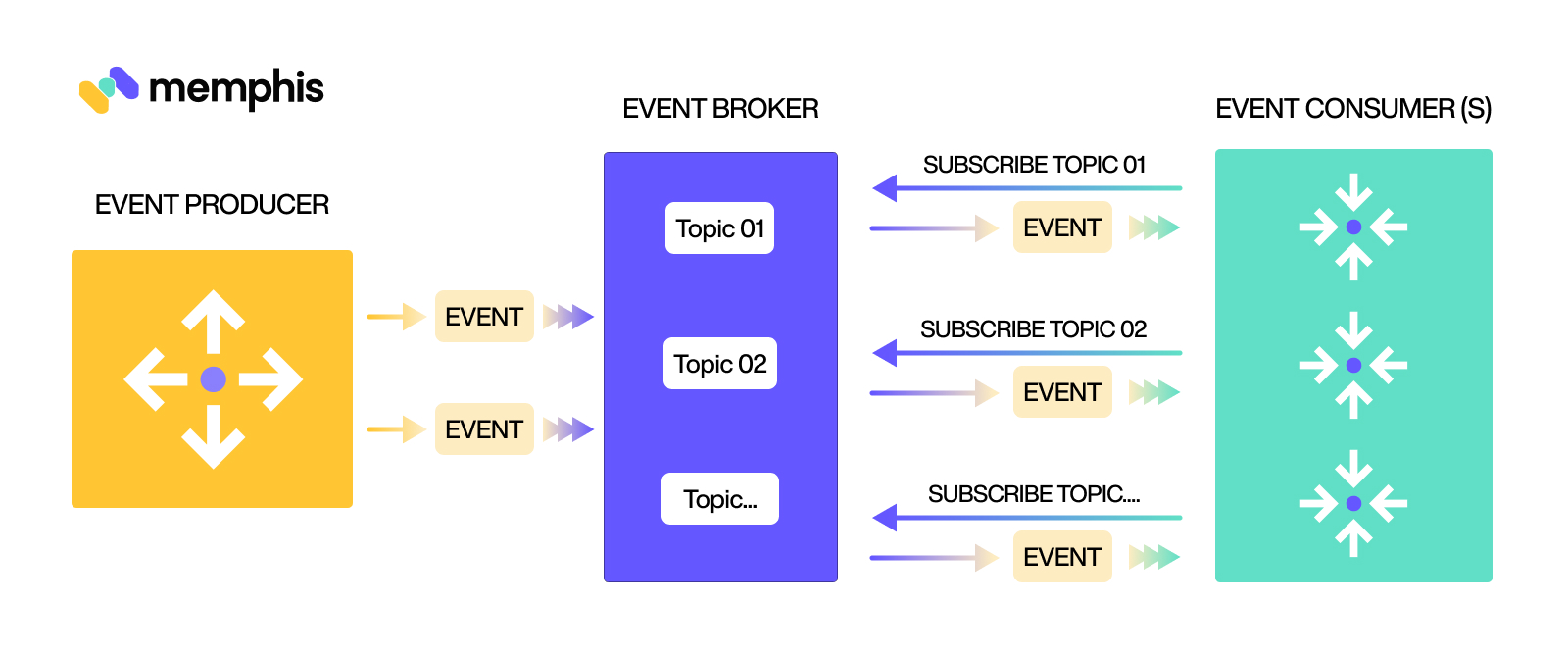

This can be achieved by creating 3 separate tasks. The figure above illustrates the Event-Driven Architecture.

The Event Producer first senses a change – “item in cart” to “order placed”. This state change is then represented as an event. The event will be sent to the Event Broker which will act as a middleman. The Event Broker will then send three individual requests to three different Event Consumers.

For example, the first consumer will take care of the email confirmation, the second will be responsible for sending an SMS, whereas the third one is responsible for calling other APIs regarding further action (such as informing the warehouse, etc.).

The advantage of Event-Driven Architecture in such a scenario is that these 3 tasks can be accomplished concurrently but in complete isolation. Moreover, if one consumer is taking more time – for instance, the API call is taking time to respond, then it does not hinder the other two tasks.

What if we want to add more functionality? Such as sending the information to the vendor the moment an order is placed. Then, we can just add one more consumer in the last layer of the architecture.
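The fan-out described in this example can be sketched with a thread pool. The consumer functions and the simulated third-party timeout are assumptions for illustration; a real broker would deliver the event over the network rather than through `ThreadPoolExecutor`.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def send_email(event: dict) -> str:
    return f"email sent for {event['order_id']}"


def send_sms(event: dict) -> str:
    return f"sms sent for {event['order_id']}"


def call_third_party(event: dict) -> str:
    # Simulate a slow or failing external API.
    raise TimeoutError("third-party API timed out")


def fan_out(event: dict, consumers: Dict[str, Callable[[dict], str]]) -> Dict[str, str]:
    """Deliver one event to every consumer concurrently, isolating failures."""
    results: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max(len(consumers), 1)) as pool:
        futures = {name: pool.submit(handler, event)
                   for name, handler in consumers.items()}
        for name, future in futures.items():
            try:
                results[name] = future.result()
            except Exception as exc:
                # A failing consumer does not affect the others.
                results[name] = f"failed: {exc}"
    return results


consumers: Dict[str, Callable[[dict], str]] = {
    "email": send_email,
    "sms": send_sms,
    "third_party": call_third_party,
}
order_placed = {"type": "order.placed", "order_id": "o-42"}
first_run = fan_out(order_placed, consumers)

# Adding functionality means registering one more consumer.
consumers["vendor"] = lambda event: f"vendor informed of {event['order_id']}"
second_run = fan_out(order_placed, consumers)
```

The email and SMS consumers succeed even though the third-party call fails, and the vendor consumer is added without touching the producer or the existing consumers.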

Example 2: Changing the Data

As we saw in Streaming Architecture as well, it’s important to keep track of current and past data so that you can utilize it later. Taking the same example of an e-commerce website, we need to store information such as user data, order history, etc. Let’s assume there are 3 data storage options available for this website –

- Cache: for storing the recent information

- Data Lake: for storing the data in its raw form to utilize it later

- Data Warehouse: for storing the data in a relational form

Now, after the order has been placed, we need to update these 3 data sources with the latest information. To do that, we can create an event containing the modified data.

The same process happens again – The Event Producer publishes the data which is eventually picked up by 3 different Event Consumers.

Now, each Event Consumer may update the data in a form that is suitable for its data sources. All of this can happen independently and concurrently with the help of Event-Driven Architecture.
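As a rough sketch of this example, each consumer below stores the same event in the form its data store prefers. The in-memory dictionary and lists stand in for a real cache, data lake, and data warehouse, and the event fields are assumptions for illustration.

```python
import json
from typing import Callable, Dict, List, Tuple

cache: Dict[str, str] = {}                       # latest status per order
data_lake: List[str] = []                        # raw events, stored as-is
warehouse_rows: List[Tuple[str, str, str]] = []  # relational-style rows


def update_cache(event: dict) -> None:
    # Cache: keep only the most recent information.
    cache[event["order_id"]] = event["status"]


def update_data_lake(event: dict) -> None:
    # Data lake: keep the whole event in its raw form.
    data_lake.append(json.dumps(event, sort_keys=True))


def update_warehouse(event: dict) -> None:
    # Data warehouse: keep a fixed set of columns.
    warehouse_rows.append((event["order_id"], event["user_id"], event["status"]))


def publish(event: dict, consumers: List[Callable[[dict], None]]) -> None:
    for consumer in consumers:
        consumer(event)


order_event = {"order_id": "o-42", "user_id": "u-7", "status": "placed",
               "items": ["sku-123"]}
publish(order_event, [update_cache, update_data_lake, update_warehouse])
```

The producer publishes the event once; how it is stored is each consumer's own concern.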

Conclusion

Event-Driven Architecture is changing how we design our applications now. And for the better!

The evolution from Monolithic to Distributed programming is enormous. While Microservices and Event-Driven Architecture may seem overwhelming, they are indeed revolutionary in today’s age of Cloud and Big Data.

Event-Driven Architecture is also versatile. It works well with Microservices, Reactive Architecture, as well as Streaming Architecture.

In this article, we covered these architectures in much detail and saw how everything finally comes together to build a cohesive and independent system.