RabbitMQ Durable Queue vs Memphis.dev Station

RabbitMQ is a message queue. A message queue (or message broker) is a technology that handles data transfer between the services of a microservice architecture. A message here is a piece of data, or a packet, that one service sends to another.

RabbitMQ is an open-source message broker that is simple to use. It has been around since 2007. RabbitMQ was built with Erlang. It has available client SDKs in major programming languages. RabbitMQ is scalable. You can deploy it in the cloud or on your servers.

As a message queue, RabbitMQ permits you to produce and consume messages. Your producers and consumers can reside anywhere. They only need access to your RabbitMQ instance.

RabbitMQ also supports a Pub/Sub model. In this model, your producers publish messages to a topic. In turn, consumers subscribe to this topic and consume messages as they become available.

To install RabbitMQ, use Docker. The following Docker command will get RabbitMQ running on your system:

| docker run -it --rm --name rabbitmq -p 5672:5672 -p 15672:15672 rabbitmq:3.11-management |

This docker command will pull (if not already pulled) the required images it needs to launch RabbitMQ. After that, it will start up the RabbitMQ instance.

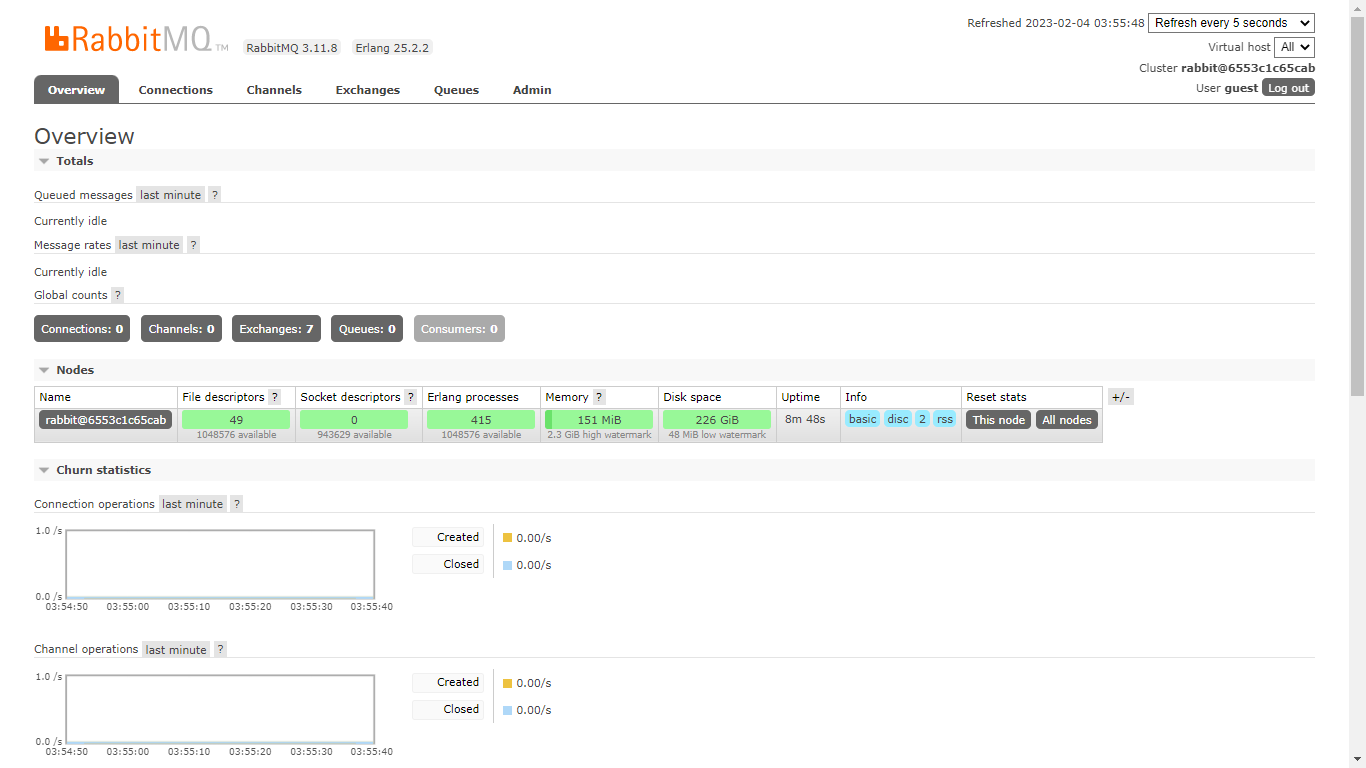

Go to localhost:15672 in your browser. Log in with “guest” as both username and password (these are the default credentials). It will then show you the basic RabbitMQ dashboard.

RabbitMQ uses a protocol known as the Advanced Message Queuing Protocol (AMQP). Like every protocol, AMQP defines the entities it uses and the rules for how it works. In RabbitMQ, there are three AMQP entities: bindings, exchanges, and queues.

This article will be looking at a special type of queue (durable queues). But then, just before that …

What is a Queue in RabbitMQ

In the world of data structures, a queue is a list that items can only enter at the bottom and leave from the top. A queue holds its data in sequence. Data can be enqueued (added at the bottom) or dequeued (removed from the top). In other words, queues follow the “First In, First Out (FIFO)” rule.
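The FIFO rule can be illustrated with a few lines of JavaScript. This is only a toy sketch: the `FifoQueue` class and its method names are made up for illustration and say nothing about how RabbitMQ implements queues internally.

```javascript
// A toy FIFO queue: items enter at one end and leave from the other,
// so the first item added is the first item removed.
class FifoQueue {
  constructor() {
    this.items = [];
  }

  // Add an item at the entry end of the queue.
  enqueue(item) {
    this.items.push(item);
  }

  // Remove and return the oldest item (undefined if the queue is empty).
  dequeue() {
    return this.items.shift();
  }

  get size() {
    return this.items.length;
  }
}

const queue = new FifoQueue();
queue.enqueue('first');
queue.enqueue('second');
queue.enqueue('third');

const order = [queue.dequeue(), queue.dequeue(), queue.dequeue()];
console.log(order); // [ 'first', 'second', 'third' ]
```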

In RabbitMQ, a queue holds messages before they get to consumers. RabbitMQ keeps messages in the queue while it dispatches each one to a consumer. Essentially, a queue is where a published (or produced) message waits until it is consumed.

All RabbitMQ queues have a name. When creating a queue, you can specify a name of your choice. Usually, you will specify a name relating to the kind of messages you will send to that queue.

If you don’t care about the name of your queue, you can let RabbitMQ generate one for you. Note that RabbitMQ doesn’t permit you to start a queue’s name with “amq.” because that prefix is reserved for the broker’s internal use.
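Both naming rules can be sketched with amqplib, the NodeJS client used later in this article. The helper names `isReservedQueueName` and `declareQueue` are hypothetical; the sketch assumes `channel` is an amqplib channel, whose `assertQueue` asks the broker to generate a name when it is given an empty string.

```javascript
// Names starting with "amq." are reserved by RabbitMQ for internal use.
function isReservedQueueName(name) {
  return typeof name === 'string' && name.startsWith('amq.');
}

// Declare a queue on an amqplib channel. With no name (or ''), the broker
// generates one; the name actually used comes back in `result.queue`.
async function declareQueue(channel, name = '', options = {}) {
  if (isReservedQueueName(name)) {
    throw new Error(`Queue name "${name}" uses the reserved "amq." prefix`);
  }
  const result = await channel.assertQueue(name, options);
  return result.queue;
}
```

Rejecting the reserved prefix on the client side just gives a clearer error message; the broker would refuse such a declaration anyway.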

Aside from its name, a queue can have other properties that define its behavior. You can set these properties (alongside the name) when creating or declaring that queue. Such queue properties include:

- Auto-delete,

- Exclusivity, and

- Durability.

An auto-delete queue, as its name implies, is automatically deleted from RabbitMQ after its last consumer unsubscribes. An exclusive queue is likewise deleted when the connection that declared it closes, and only that one connection can use it. Durable queues are our focus in this article. So,

What are Durable Queues in RabbitMQ

Durable queues are special. They come back to life after a restart of the running RabbitMQ instance. They don’t crash alongside a crash of your servers or of RabbitMQ itself. They are persistent. Through them, RabbitMQ ensures high data fidelity.

Unlike durable queues, other queue types disappear on rebooting a RabbitMQ instance. They don’t survive instance restart by design. This is where durable queues come in. There are special use cases (as we will see below) where durable queues are indispensable.
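Durability, like auto-delete and exclusivity, is chosen when the queue is declared. As a rough sketch with amqplib, the properties map onto the `durable`, `autoDelete`, and `exclusive` options of `channel.assertQueue`. The `QUEUE_PROFILES` table and the `declareWithProfile` helper are hypothetical names used only for this example.

```javascript
// Option sets for amqplib's channel.assertQueue(name, options).
const QUEUE_PROFILES = {
  // Comes back after a broker restart.
  durable: { durable: true },
  // Disappears when the broker restarts.
  transient: { durable: false },
  // Deleted once its last consumer unsubscribes.
  autoDelete: { durable: false, autoDelete: true },
  // Usable only by the declaring connection; deleted when it closes.
  exclusive: { durable: false, exclusive: true },
};

// Declare a queue using one of the profiles above.
async function declareWithProfile(channel, name, profile) {
  const options = QUEUE_PROFILES[profile];
  if (!options) throw new Error(`Unknown queue profile: ${profile}`);
  return channel.assertQueue(name, options);
}
```

Note that amqplib treats `durable` as true when the option is left out, so a transient queue has to be requested explicitly.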

About the Durability of Queues in RabbitMQ

RabbitMQ achieves queue durability through the following mechanisms:

1. Storage (Persistence)

This is about where RabbitMQ stores the messages that pass through the broker. By default, messages are held in memory, that is, in the RAM allocated to RabbitMQ.

Given that the running RabbitMQ instance can shut down at any time (maybe because of a server crash), messages stored only in memory are not safe. We lose them forever when such a crash occurs. That’s why, with durable queues, we have to make messages persistent.

Persistence refers to writing messages to the disk first as they enter the message broker. This way, we are sure a copy of them exists. RabbitMQ (the message broker) will then send the messages to the appropriate consumer from disk instead of from memory. Such retention ensures that durable queues deliver the messages and that the messages are not lost.

The durability of a queue doesn’t mean that queued messages will be recovered when the server restarts. Only the queue itself comes back to life. Remember to mark as persistent the messages that will pass through durable queues. If a message is not persistent, it will be lost after a server restart.
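The interplay between queue durability and message persistence can be summed up in a small sketch. `afterRestart` is a hypothetical helper that simply encodes the rule described above, and `publishPersistent` shows how both settings could be applied with amqplib, assuming `channel` is an amqplib channel.

```javascript
// What is left after a broker restart, given the queue and message settings.
function afterRestart({ durableQueue, persistentMessage }) {
  return {
    queueExists: durableQueue,
    // A message survives only if it is persistent AND its queue is durable.
    messageExists: durableQueue && persistentMessage,
  };
}

// Publish a message marked as persistent to a durable queue.
async function publishPersistent(channel, queue, text) {
  await channel.assertQueue(queue, { durable: true });
  return channel.sendToQueue(queue, Buffer.from(text), { persistent: true });
}

// Print all four combinations.
for (const durableQueue of [true, false]) {
  for (const persistentMessage of [true, false]) {
    console.log({ durableQueue, persistentMessage }, afterRestart({ durableQueue, persistentMessage }));
  }
}
```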

2. Durable Exchanges

In RabbitMQ, an exchange is the entity to which we publish messages. Exchanges have their own types and configuration. Irrespective of its settings, an exchange routes the messages it receives to the appropriate queue(s). In turn, the queue delivers messages to its consumers.

Exchanges can be durable. Like durable queues, durable exchanges survive restarts of the RabbitMQ instance. This makes sense because both the exchange and the queue have to always be available to handle persistent messages.

Exchanges are durable by default. You still have the option of specifying durable as true when creating an exchange. Together with durable exchanges and persistent messages, durable queues in RabbitMQ are able to reach their full potential.

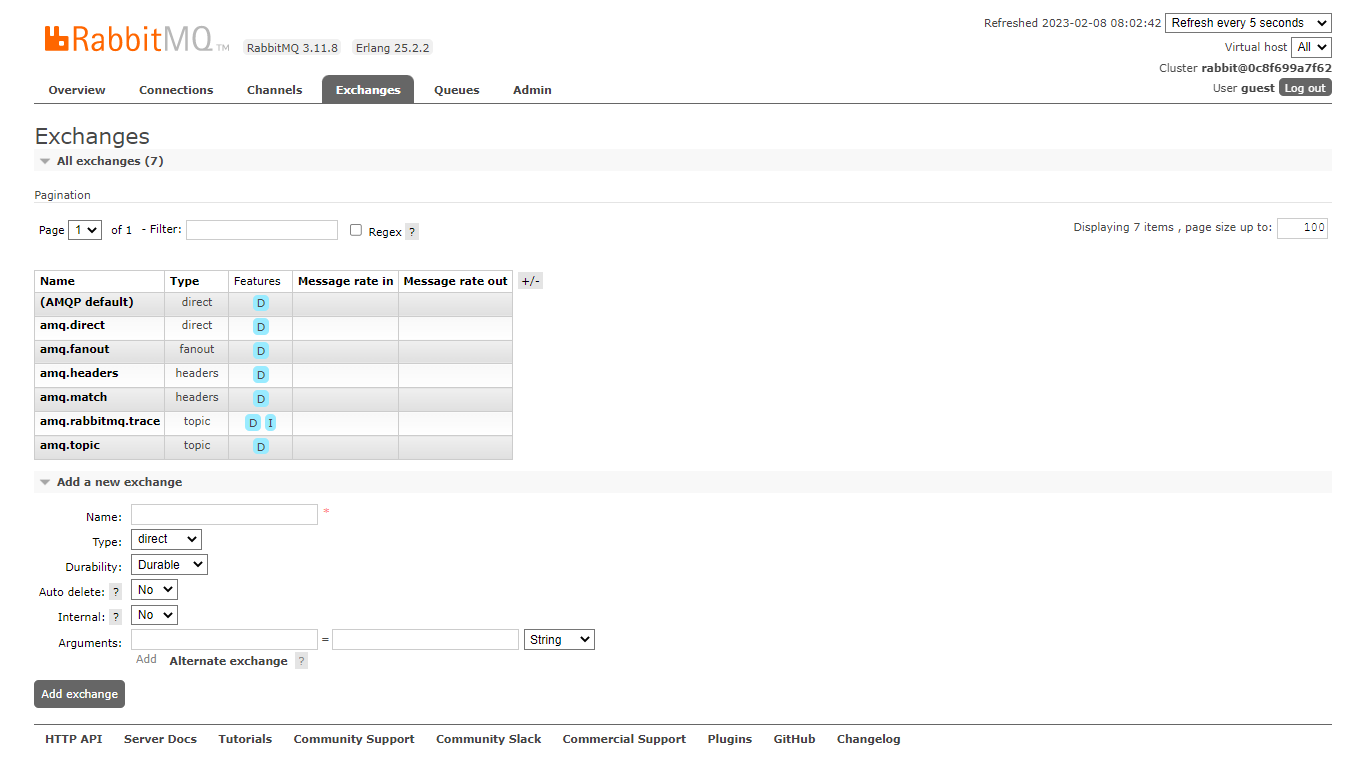

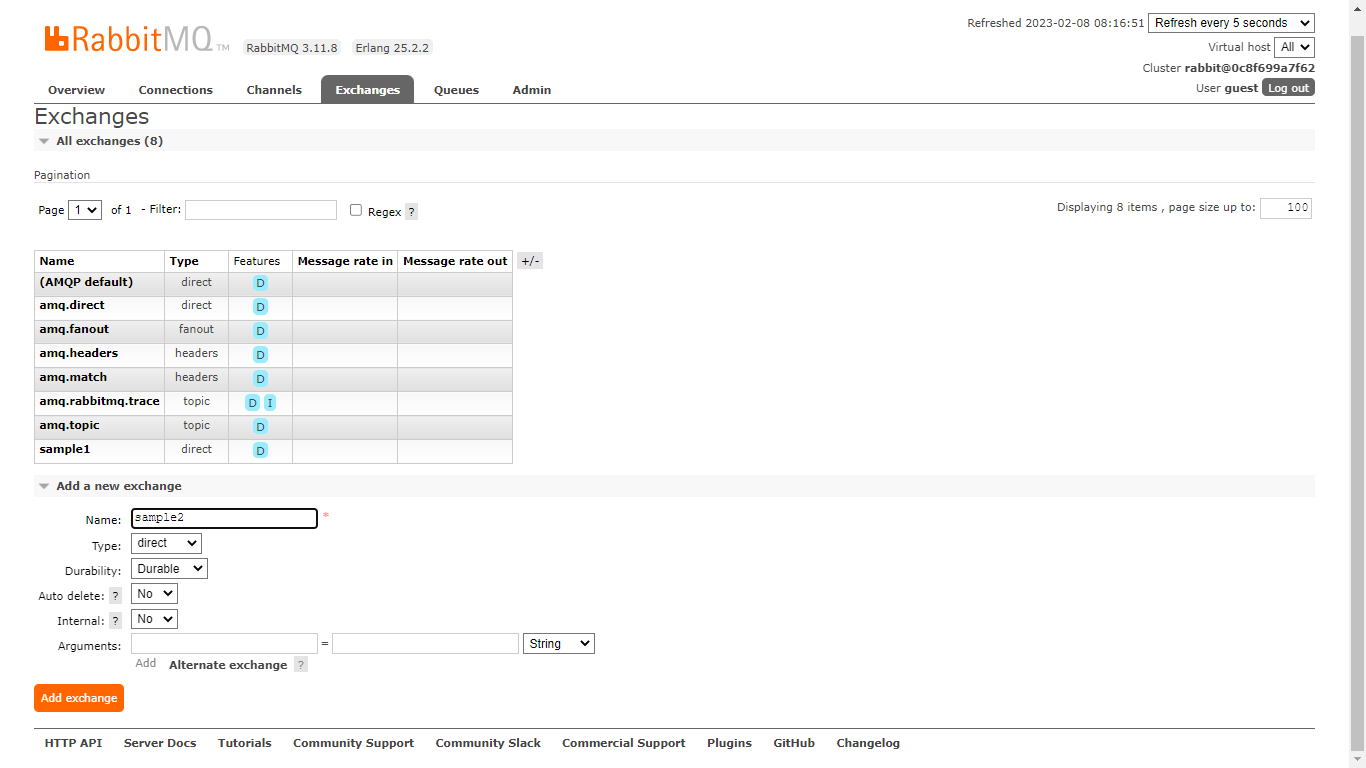

Durable exchanges and queues are marked with a “D” in the RabbitMQ dashboard (under Features). When you start a new RabbitMQ instance for the first time, RabbitMQ auto-creates some default exchanges for you. As you would expect, these exchanges are durable.

3. Replicas/High Availability

RabbitMQ can run with multiple interconnected nodes. These nodes work together as a cluster. A RabbitMQ cluster is a logical group of nodes that each keep the distributed state of the broker.

When RabbitMQ starts, it runs as a single node. We can then attach more nodes/servers and detach them at any time. All nodes in a cluster share the broker’s definitions (exchanges, bindings, and queue metadata); the messages themselves are only copied to other nodes for replicated queue types such as quorum queues.

Clusters act like a buffer against failure. It is common practice to set up a cluster for your RabbitMQ deployment so that the broker survives damage to the machine hosting any one node. This is what we want: with replicated nodes in a cluster, our durable queues stay available.
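On the client side, a cluster is only useful if the application can reach a surviving node. The following is a minimal sketch of one possible approach: try each node in turn and keep the first connection that succeeds. The helper name `connectToAnyNode` and the node hostnames in the usage note are assumptions, not part of RabbitMQ or amqplib.

```javascript
// Try each node URL in turn and return the first connection that succeeds.
// `connect` is expected to behave like amqplib's connect(url).
async function connectToAnyNode(urls, connect) {
  const errors = [];
  for (const url of urls) {
    try {
      return await connect(url);
    } catch (err) {
      // Remember why this node failed, then move on to the next one.
      errors.push(`${url}: ${err.message}`);
    }
  }
  throw new Error(`No RabbitMQ node reachable:\n${errors.join('\n')}`);
}
```

With amqplib installed, it could be called as `connectToAnyNode(['amqp://node1', 'amqp://node2'], (url) => amqp.connect(url))`, where `node1` and `node2` stand in for your own hosts.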

Use cases for durable queues

Durable queues are the go-to way for some critical applications or scenarios. Let’s examine 5 examples below:

- Logging

- Financial Transactions

- Queueing Tasks

- Chat Systems

- E-commerce applications

Logging

Today, logs are important in all systems. Data engineers, developers, DevOps engineers, site reliability engineers, and system admins all use logs to monitor what’s happening inside a given system.

Because of the complexity of some systems, a dedicated microservice usually handles logs. This service communicates with others to receive logs. It also stores the logs as appropriate or emits them to any setups for monitoring.

At no point do we want to lose any vital logs of the system. We want to keep track of each one in detail to really understand what’s going on within our data systems.

Durable queues solve this problem. Our log queues should be durable. As such, while producing to or consuming from the queue, we are confident that we won’t lose inter-service messages.

Financial Transactions

The use of technology to handle financial transactions keeps growing by the day. The subject of finance is a delicate case. Nobody wants the slightest mistakes with money in digital processes.

Take a bank for example. Bank staff and managers record financial transactions for customers across different documents. In foreign exchange platforms, brokers are constantly swapping money across currencies. Insurance firms also have to handle financial transactions. These transactions have to be handled properly.

Irrespective of how money circulates in your architecture, there is always a need for communication across the concerned services of the system. Chances are you’ve settled on a message broker to smooth the inter-service communication.

Use durable queues for sending and receiving important transactions in the financial system. Use durable queues to entirely avoid losses of records within financial systems. Use them to double-confirm the same transaction before notifying users.
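One way to be sure the broker has accepted a transaction before telling the user about it is to publish on a confirm channel. The sketch below assumes amqplib and an already open `connection`; the function name `publishConfirmed` is hypothetical, and the queue name and transaction shape are left to the caller.

```javascript
// Publish a transaction record and wait until the broker confirms it.
// Rejects if the broker refuses (nacks) the message.
async function publishConfirmed(connection, queue, transaction) {
  // A confirm channel makes the broker acknowledge every published message.
  const channel = await connection.createConfirmChannel();
  try {
    await channel.assertQueue(queue, { durable: true });
    channel.sendToQueue(
      queue,
      Buffer.from(JSON.stringify(transaction)),
      { persistent: true }
    );
    // Resolves once all messages published on this channel are confirmed.
    await channel.waitForConfirms();
  } finally {
    await channel.close();
  }
}
```

Only after `publishConfirmed` resolves would the calling service go on to notify the user.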

Queueing Tasks

Let’s say you want to build a system similar to YouTube. When a user uploads a video, you don’t want to block the UI because of the upload process (it is time-consuming). So you defer the handling of the entire video upload-and-publish process to a message broker.

The process of video transfer is done in steps:

- Start uploading. The upload continues in the background. Allow the user to fill in other video details in the UI.

- When the upload is complete, within your backend, process the video into various resolutions (and maybe audio qualities too).

- When the resolution processing is complete, check the video’s audio for any copyrighted music.

- When any copyright issues have been settled, generate subtitles (if need be) for the video.

- When the entire process is complete, notify channel subscribers that a new video is out.

There could be more but the above is just an example. As your app grows, each task above becomes complex. Let’s say that a separate microservice handles each task. You have to queue these tasks through the message broker. As such, when a service is free, it can start processing the next task.

Queueing tasks this way is a good use case for durable queues. The goal is a solid and resilient system in which every task gets executed (given that a message broker is handling communication).

Your servers could be overwhelmed by traffic. Developers could ship bugs to production. Your cloud provider could have issues charging your credit card and then stop your virtual machines.

Those are unforeseen scenarios that you can prepare against by using durable queues. Use durable queues to queue tasks when you don’t want to miss out on any of those tasks.

There are some cases where you might not find a durable queue useful. And that’s okay. For example, a durable queue could be overkill for a prototype of a system you are still designing.
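A worker service in a pipeline like the video example could consume its tasks along these lines. This is a sketch assuming amqplib; `startWorker` and `handleTask` are hypothetical names, and tasks are assumed to be JSON-encoded. A task is acknowledged only after it has been handled, so a task whose worker crashes midway stays on the durable queue.

```javascript
// Consume tasks one at a time and acknowledge each only after it is done.
// `handleTask` is an async function supplied by the service.
async function startWorker(channel, queue, handleTask) {
  await channel.assertQueue(queue, { durable: true });
  // Give this worker at most one unacknowledged task at a time.
  await channel.prefetch(1);

  return channel.consume(queue, async (msg) => {
    if (msg === null) return; // the broker cancelled this consumer
    try {
      await handleTask(JSON.parse(msg.content.toString()));
      channel.ack(msg); // done: the broker can discard the task
    } catch (err) {
      channel.nack(msg, false, true); // failed: put the task back on the queue
    }
  }, { noAck: false });
}
```

Requeueing on every failure keeps the example short; a task that can never succeed would be redelivered forever, so a real system would likely cap retries or route such tasks elsewhere.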

Chat systems

This use case is less obvious. When a user sends a chat message, modifies an account setting, or blocks another user, you want your system to quickly propagate the change just made. You also don’t want to lose any of those changes. That could be disastrous.

Durable queues can prevent such scenarios. With durable queues, you are sure that each microservice will appropriately respond to any change within the system. For example, you are sure that all members of a chat group will receive every chat message.

E-commerce applications

E-commerce apps are another kind of complex system whose design we can improve with message brokers. You can use a broker to handle purchases. You want to notify stock/inventory about sold items. You want to handle the inflow and outflow of money. You want to move the goods for shipping.

Each step in the flow of purchases in an e-commerce app is crucial. None of them should be dropped, because a skipped step means that the purchase won’t complete. That gives a bad customer experience and adds to the headache of the customer support personnel.

Use durable queues across communications in e-commerce applications. As producers and consumers interact with the durable queues, each task in the purchase flow will be handled appropriately.

How to set up a Durable Queue in RabbitMQ

You can set up a durable queue through the dashboard UI of RabbitMQ, through the CLI, or through the code that interacts with the broker.

Before we dive in, remember that you need a durable exchange to bind to the queue you want to create. This step is necessary so that messages actually get to the durable queue of choice.

Dashboard

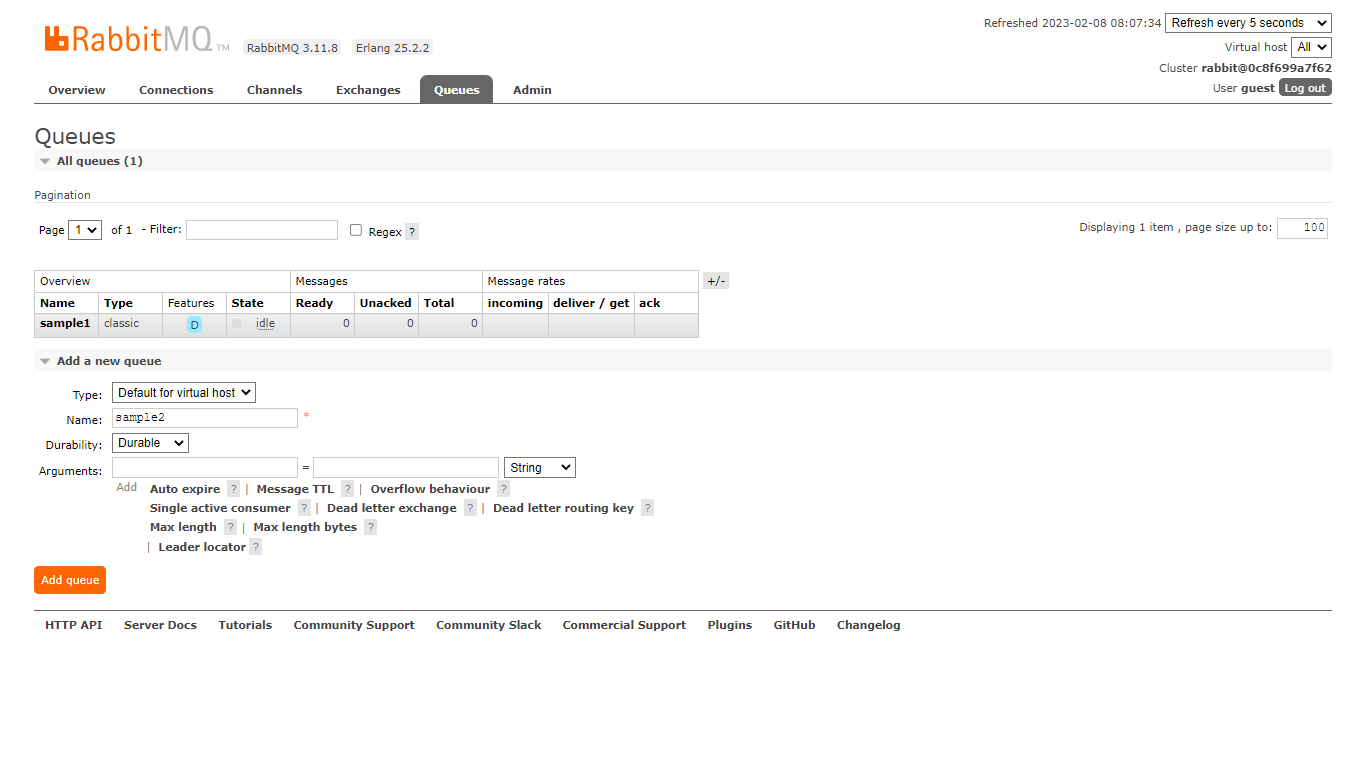

In the RabbitMQ dashboard, go to the Queues tab. At a basic level, enter a name for the queue and select “durable” under durability. Click on “Add Queue”. This will create a durable queue and show it in the list of queues.

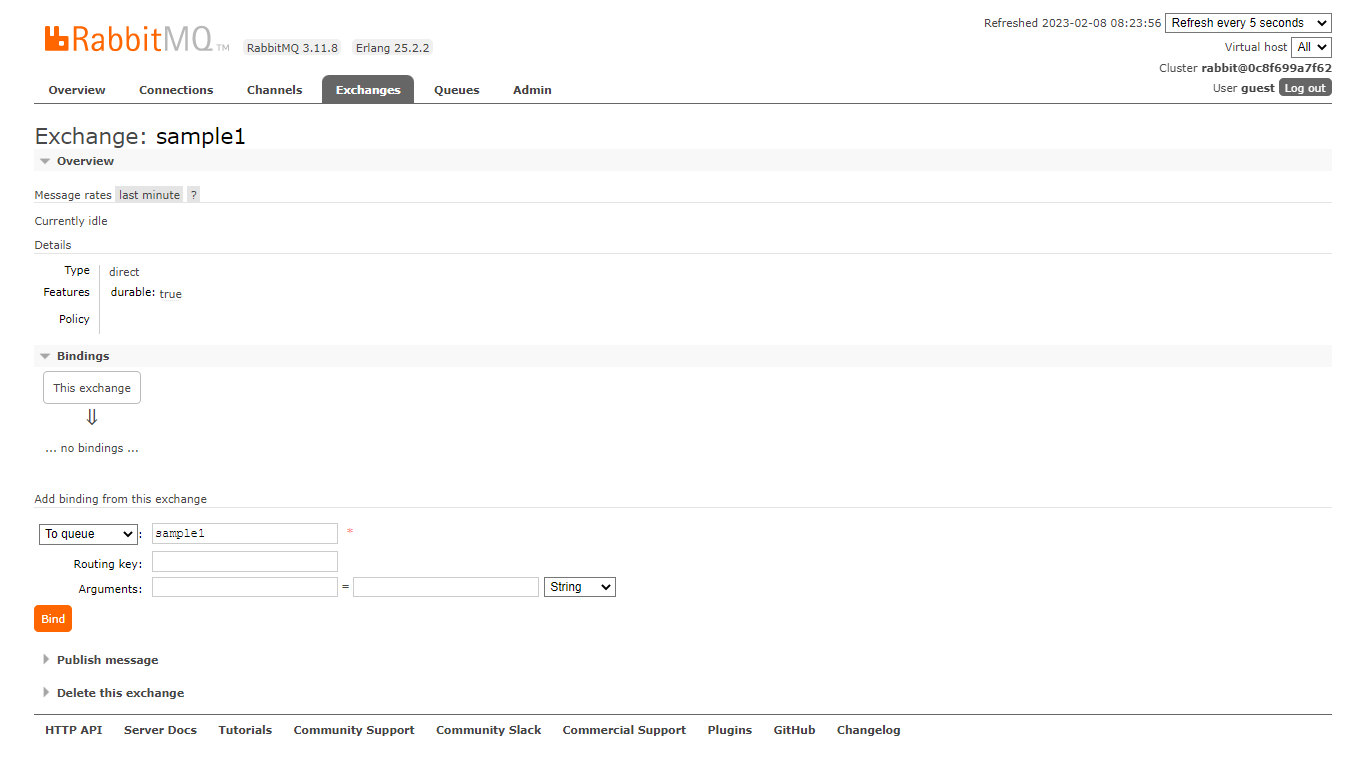

Now go to the Exchanges tab, and also create an exchange. Enter the name of the exchange and ensure that the “durable” property is also set. Click on “Add Exchange”. The newly created exchange will now show in the list of Exchanges in the dashboard.

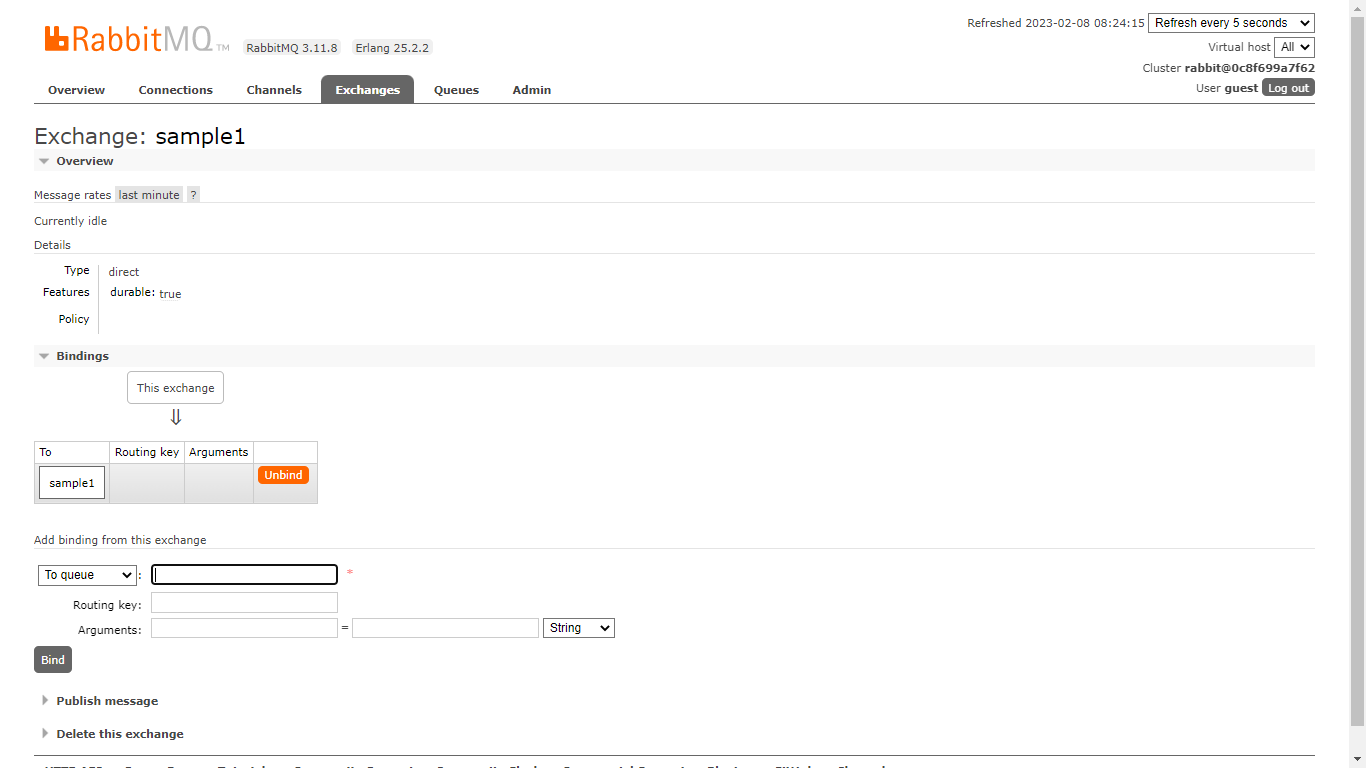

The last step is to bind the durable exchange and the durable queue. This binding is important because it will permit persistent messages to get to the durable queue from the durable exchange. To create a binding, click on the exchange to enter its detail page. Enter the queue’s name in the bindings section. Click on “Bind”.

After it is bound, the queue will now be listed under the bindings section. You can unbind it if the need arises.

Let’s test this durable queue. Let’s test if a published message will still be available after restarting the RabbitMQ instance.

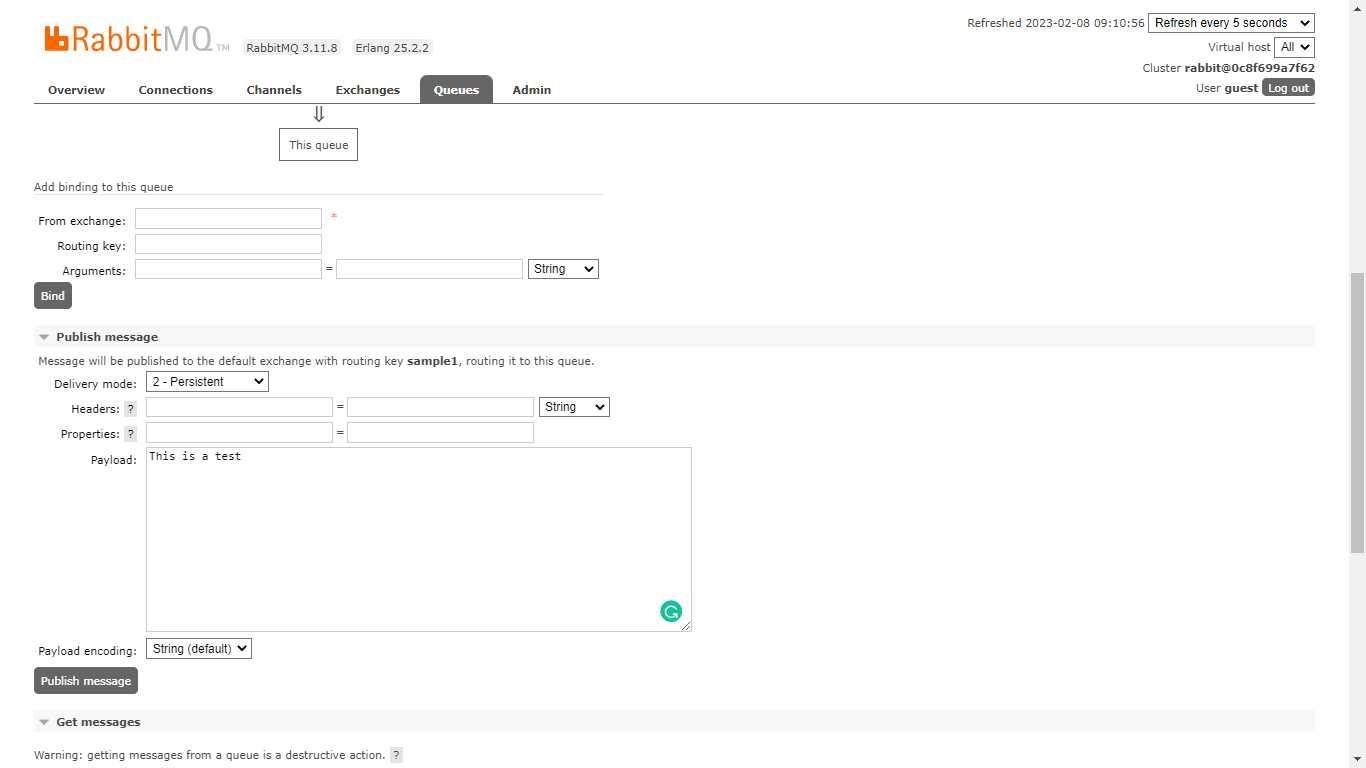

We will publish a message to the queue. Go back to the Queues tab. Click on the queue you created to enter its detail page. Scroll down to “Publish message”, enter a message, set its persistence as “non-persistent”, then publish it.

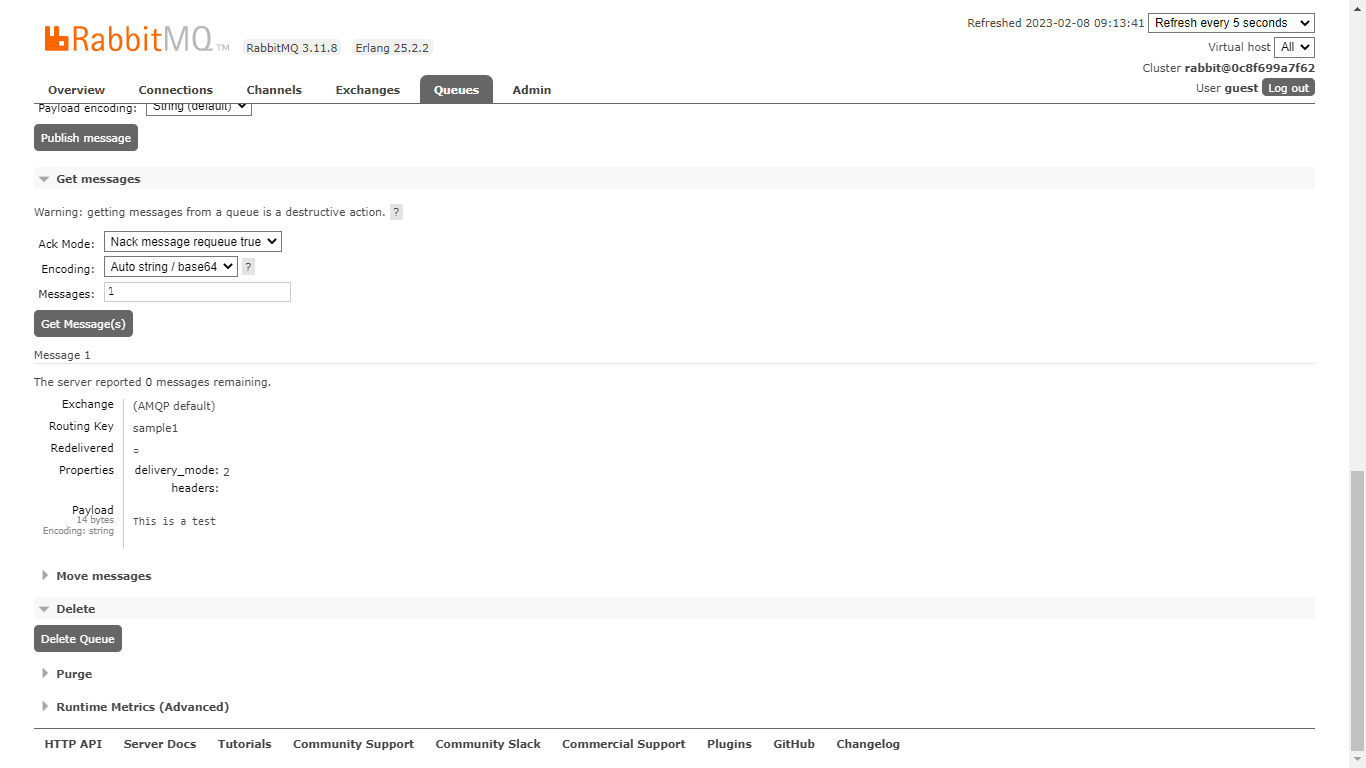

Scroll down to “Get Message” and click on the “Get Messages” button. The message will come up. Restart the RabbitMQ instance in Docker. Go back to the dashboard and notice that the queue is still there (it is durable). Go to “Get Messages” and click the button once more. This time, you won’t see the message.

Repeat the same steps but this time, publish a message that is “persistent”. After restarting RabbitMQ, the message will still appear in the “Get Messages” section (after clicking its button).

CLI

RabbitMQ comes with a CLI alongside the dashboard. This CLI is known as “rabbitmqadmin”. It can do most of the things one can do with the RabbitMQ UI. Let’s use the CLI to set up and test a durable queue.

First, download the CLI. To do so, go to:

| http://localhost:15672/cli/rabbitmqadmin |

and “rabbitmqadmin” will be downloaded automatically. It is a Python script, so it needs Python to run.

If you are using Windows, have Python installed and added to the path, and prefix the following commands with “python” for rabbitmqadmin to work. If you are on a Unix-like system, copy the downloaded rabbitmqadmin file to a directory that is part of the path variable.

Run the following command to create the exchange:

| rabbitmqadmin declare exchange name=sample2 durable=true type=direct |

If an exchange with this name and the same properties already exists, the command leaves it as it is. The durable=true flag is optional because exchanges are durable by default; we spell it out here because a durable exchange is what we want. type is mandatory, and direct is the most basic type. You can supply a name of your choice (instead of sample2). The command should run successfully and respond with “exchange declared”.

Now it is time to create the queue. Run the following command:

| rabbitmqadmin declare queue name=sample2 durable=true |

As usual, you can set the name to anything you like. The command should run successfully and respond with “queue declared”.

Now, bind the queue to the exchange. Run the following command. It should run and respond with “binding declared”.

| rabbitmqadmin declare binding source="sample2" destination_type="queue" destination="sample2" |

And there it is: you have a durable queue bound to a durable exchange, both created from the RabbitMQ CLI. If you refresh the RabbitMQ dashboard, you should see your newly created durable queue and exchange.

You can test its durability there in the UI. You can also use the CLI to execute this test. Let’s use the CLI to publish a message to the queue. Run the following command:

| rabbitmqadmin publish exchange=sample2 routing_key= payload="Hello, World!" properties="{\"delivery_mode\":2}" |

Notice that we specified the exchange (and not the queue) to publish to. Also, we passed the routing_key flag without a value, because routing_key is compulsory. We left it empty because the binding we declared has no routing key of its own, and a direct exchange delivers a message to the queues whose binding key matches the message’s routing key exactly.

The publish command also accepts properties, which must be valid JSON that configures the message. Setting delivery_mode to 2, as the command does, makes the message persistent. The command should run and respond with “message published”.
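Why an empty routing key reaches the queue can be shown with a toy model of a direct exchange. This is an illustration only, not RabbitMQ code: `routeDirect` is a made-up function that mimics the exact-match rule, and the binding list mirrors the binding declared earlier without a routing key.

```javascript
// Toy model of a direct exchange: a message goes to every queue whose
// binding key is exactly equal to the message's routing key.
function routeDirect(bindings, routingKey) {
  return bindings
    .filter((binding) => binding.routingKey === routingKey)
    .map((binding) => binding.queue);
}

// The binding declared earlier had no routing key, so its key is ''.
const bindings = [{ queue: 'sample2', routingKey: '' }];

console.log(routeDirect(bindings, ''));       // [ 'sample2' ]
console.log(routeDirect(bindings, 'orders')); // []
```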

Now restart the RabbitMQ instance in Docker. Then try getting the message with the following command:

| rabbitmqadmin get queue=sample2 |

It should return the message we published. Notice that this time we specified the queue, since messages are consumed from queues. If the message had not been persistent, it would not be returned. Likewise, if the queue had not been durable, it would not be available after the server restarts.

Note that running any of these commands while the server is restarting will fail.
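rabbitmqadmin talks to the management plugin’s HTTP API, so the same declarations can be made from code. The sketch below only builds the request for declaring a durable queue, so it can be inspected without a running broker. The helper name `buildDeclareQueueRequest` is hypothetical; the example assumes the default virtual host “/” and the default guest credentials used earlier.

```javascript
// Build the HTTP request that declares a durable queue through the
// management HTTP API (PUT /api/queues/{vhost}/{name}).
function buildDeclareQueueRequest(baseUrl, vhost, queue, user, password) {
  // The vhost and queue name are URL-encoded, so "/" becomes "%2F".
  const url = `${baseUrl}/api/queues/${encodeURIComponent(vhost)}/${encodeURIComponent(queue)}`;
  return {
    url,
    options: {
      method: 'PUT',
      headers: {
        'Content-Type': 'application/json',
        Authorization: 'Basic ' + Buffer.from(`${user}:${password}`).toString('base64'),
      },
      body: JSON.stringify({ durable: true, auto_delete: false, arguments: {} }),
    },
  };
}

const request = buildDeclareQueueRequest('http://localhost:15672', '/', 'sample2', 'guest', 'guest');
console.log(request.url); // http://localhost:15672/api/queues/%2F/sample2
```

With Node 18 or later and a running broker, the request could be sent with `await fetch(request.url, request.options)`.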

Code

The obvious and most common way you will interact with RabbitMQ is through your codebase. With code, you can interact with RabbitMQ in any programming language of your choice. We will use JavaScript (NodeJS) in this article to set up a durable queue.

Run the following commands to initialize a new NodeJS project and install amqplib, the RabbitMQ client library for NodeJS. Install NodeJS first if you don’t have it.

| mkdir testdurable && cd testdurable && npm init -y && npm i --save amqplib |

Create an index.js file inside the newly created project folder. Paste the following code inside and run it. The code connects to RabbitMQ, creates a durable exchange and queue, binds them, and produces a persistent message.

| const amqp = require('amqplib'); (async () => { try { const connection = await amqp.connect('amqp://localhost'); const channel = await connection.createChannel(); await channel.assertExchange('sample3', 'direct', { durable: true }); await channel.assertQueue('sample3', { durable: true }); await channel.bindQueue('sample3', 'sample3', ''); channel.sendToQueue('sample3', Buffer.from('Hello World!'), { persistent: true }); /* close the channel and connection so the buffered message is sent before the process exits */ await channel.close(); await connection.close(); } catch (error) { console.error(error); process.exit(1); } })(); |

We used sample3 for exchange and queue names. You can change them to what you like. Run the file with the following command:

| node index.js |

It should run and exit immediately. Restart the RabbitMQ instance inside Docker. Then go to the RabbitMQ dashboard. Notice the exchange and queue. Try to get the message and it should come up.
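The message can also be read back from code instead of the dashboard. The following is a minimal sketch assuming amqplib and the sample3 queue from the script above; `fetchOne` and `main` are hypothetical names. amqplib’s `channel.get` resolves to `false` when the queue is empty.

```javascript
// Fetch a single message from a queue, or null if the queue is empty.
async function fetchOne(channel, queue) {
  const msg = await channel.get(queue, { noAck: false });
  if (msg === false) return null; // nothing waiting in the queue
  channel.ack(msg); // tell the broker the message has been handled
  return msg.content.toString();
}

// Usage against a local broker (assumes amqplib is installed).
async function main() {
  const amqp = require('amqplib');
  const connection = await amqp.connect('amqp://localhost');
  const channel = await connection.createChannel();
  console.log(await fetchOne(channel, 'sample3'));
  await channel.close();
  await connection.close();
}
```

Calling `main().catch(console.error)` after a broker restart should print the persisted message, or `null` if the message was not persistent.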

Memphis stations. Durable by nature.

Memphis.dev is a next-generation message broker: a simple, robust, and durable cloud-native message broker wrapped with an entire ecosystem that enables fast and reliable development of next-generation queue-based apps. Memphis.dev is built in Go and offers client libraries for popular programming languages like Go, Java, JavaScript, .NET, and Python, as well as REST.

The equivalent concept to a queue in Memphis.dev is a station. In RabbitMQ, we have exchanges and then queues. An exchange routes messages to appropriate queues, and from the queue itself, the consumer can then consume messages.

Ordering and delivery

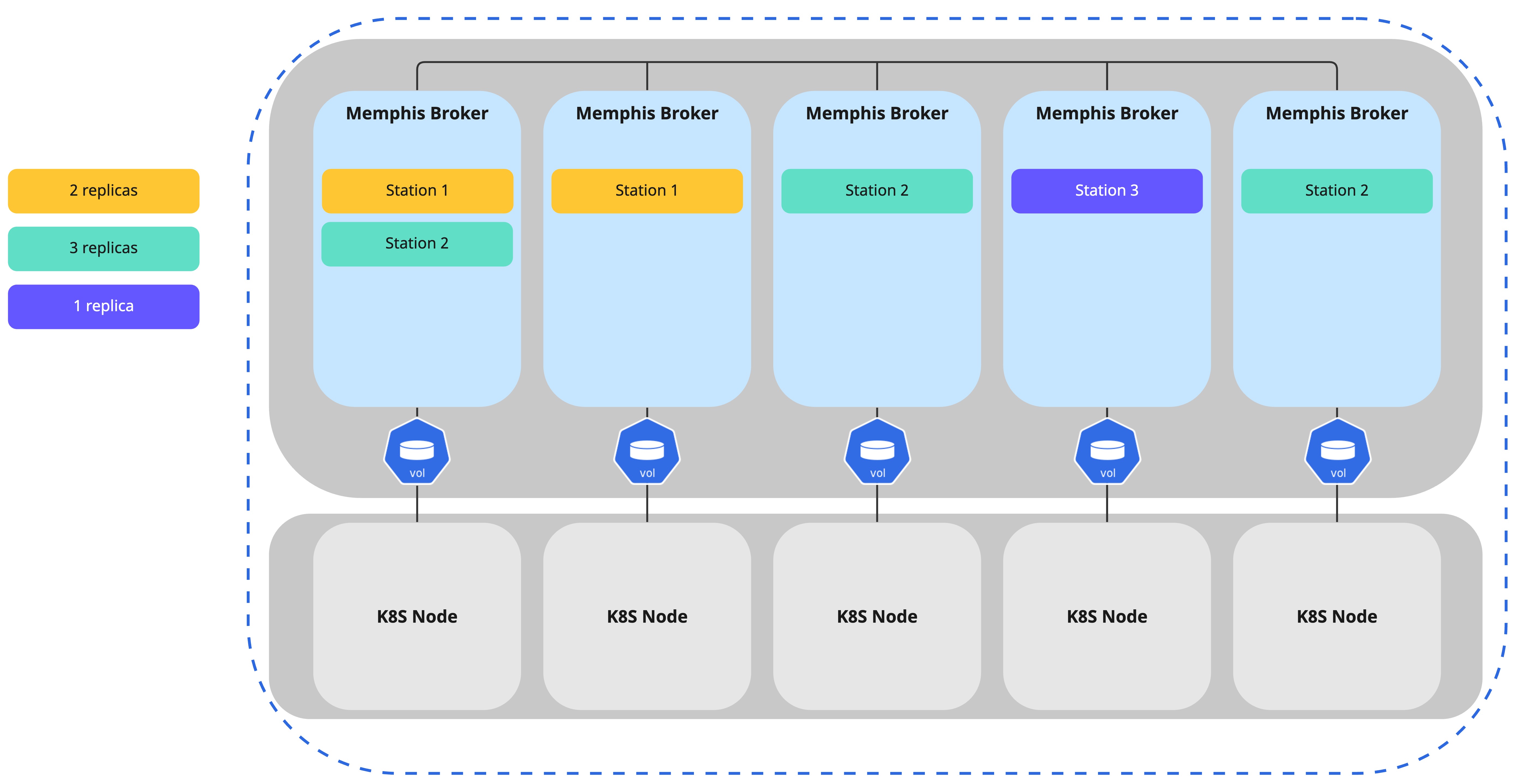

As the illustration shows, each consumer group receives all the messages stored within the station.
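The delivery rule can be pictured with a toy model. This is not the Memphis.dev SDK: `ToyStation` is a made-up class, and the group names are placeholders. It only illustrates the idea that each consumer group keeps its own position and therefore sees every stored message.

```javascript
// Toy model of a station: every consumer group keeps its own position,
// so each group reads the full sequence of stored messages.
class ToyStation {
  constructor() {
    this.messages = [];
    this.positions = new Map(); // group name -> index of the next message
  }

  produce(message) {
    this.messages.push(message);
  }

  // Return the next unread message for this group, or undefined if none.
  consume(group) {
    const position = this.positions.get(group) ?? 0;
    if (position >= this.messages.length) return undefined;
    this.positions.set(group, position + 1);
    return this.messages[position];
  }
}

const station = new ToyStation();
station.produce('m1');
station.produce('m2');

console.log(station.consume('billing'));   // m1
console.log(station.consume('billing'));   // m2
console.log(station.consume('analytics')); // m1
```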