Webhooks vs. Event Consumption: A Comparative Analysis

Introduction

In event-driven architecture and API integration, two concepts stand out: webhooks and event consumption. Both are mechanisms that let different applications or services communicate. Yet they differ significantly in approach and functionality, and by the end of this article you will see why consuming events can be a much more robust option than delivering them through webhooks.

This article assumes you operate a platform that delivers, or plans to deliver, internal events to your clients through webhooks.

Webhooks

Webhooks are user-defined HTTP callbacks triggered by specific events in a service. They enable real-time communication between systems by notifying other applications when a particular event occurs. In effect, webhooks eliminate the need for polling or manually checking for updates, making the system more efficient, event-driven, and responsive.

Key Features of Webhooks:

- Event-driven: Webhooks are event-driven and are only triggered when a specified event occurs. For example, a webhook can notify an application when a new user signs up or when an order is placed.

- Outbound Requests: They use HTTP POST requests to send data payloads to a predefined URL the receiving application provides.

- Asynchronous Nature: Webhooks operate asynchronously, allowing the sending and receiving systems to continue their processes independently.
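To make the outbound-request model concrete, here is a minimal sketch, using only Python’s standard library, of the HTTP POST a webhook sender builds and fires. The payload shape (`type`, `data`), the function names, and the callback URL are hypothetical choices for illustration; real providers define their own formats.

```python
import json
import urllib.request


def build_webhook_request(callback_url: str, event_type: str, data: dict) -> urllib.request.Request:
    """Build (but do not send) the POST a webhook sender makes to a client's URL."""
    # Hypothetical payload format: {"type": ..., "data": ...}
    body = json.dumps({"type": event_type, "data": data}).encode("utf-8")
    return urllib.request.Request(
        callback_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_webhook(req: urllib.request.Request, timeout: float = 5.0) -> int:
    """Perform one delivery attempt and return the HTTP status.

    urlopen raises urllib.error.HTTPError for non-2xx responses and
    urllib.error.URLError for network failures.
    """
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status
```

For example, `send_webhook(build_webhook_request("https://client.example.com/webhooks/orders", "order.placed", {"order_id": "ord_123"}))` would attempt one delivery to that (hypothetical) client endpoint.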

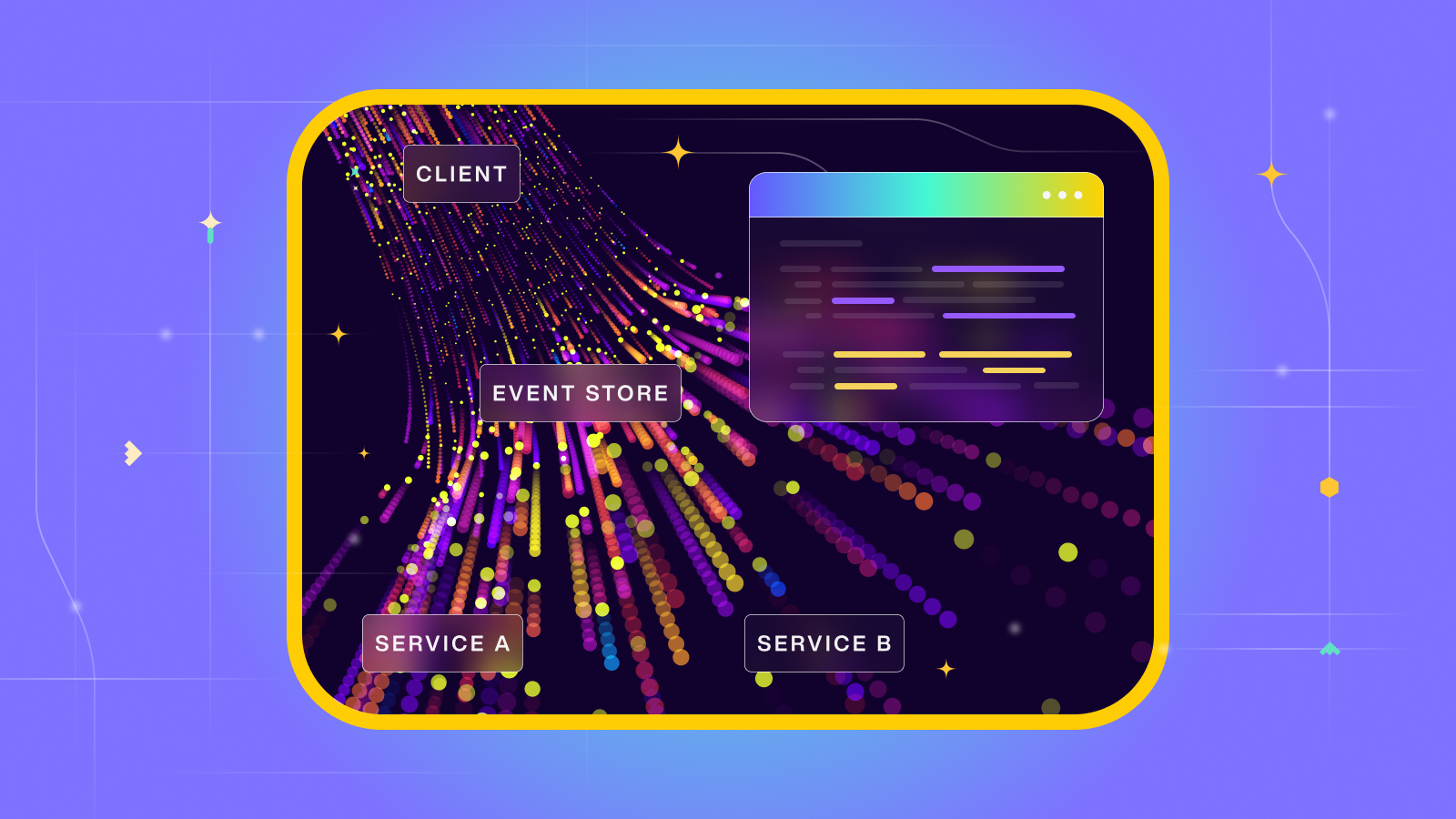

Event Consumption

Event consumption involves receiving, processing, and acting upon events emitted by various systems or services. This mechanism facilitates the seamless integration and synchronization of data across different applications.

Key Features of Event Consumption:

- Message Queues or Brokers: Event consumption often involves utilizing message brokers like Memphis.dev, Kafka, RabbitMQ, or AWS SQS to manage and distribute events.

- Subscriber-Driven: Unlike webhooks, event consumption relies on subscribers who listen to event streams and process incoming events.

- Scalability: Event consumption systems are highly scalable and can efficiently handle large volumes of events, since more subscribers can be added to share the load.
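A rough illustration of the subscriber-driven, pull-based model follows. It uses Python’s standard `queue` module as a stand-in for a broker, so it is not the API of Memphis, Kafka, or any other broker. Several subscribers in one hypothetical consumer group pull from a shared queue, each taking the next event only when it is free, so every event is handled by exactly one subscriber and adding subscribers spreads the load.

```python
import queue
import threading
from typing import Any, List, Tuple


def subscriber(worker_id: int, events: "queue.Queue[Any]",
               results: List[Tuple[int, Any]], lock: threading.Lock) -> None:
    """Pull the next event only when this subscriber is free to handle it."""
    while True:
        try:
            event = events.get_nowait()
        except queue.Empty:
            return  # nothing left to pull; a real consumer would keep polling
        # "Process" the event; here we only record which subscriber handled it.
        with lock:
            results.append((worker_id, event))


def run_consumer_group(event_list: List[Any], n_workers: int = 3) -> List[Tuple[int, Any]]:
    events: "queue.Queue[Any]" = queue.Queue()
    for e in event_list:
        events.put(e)  # stand-in for events already published to the broker
    results: List[Tuple[int, Any]] = []
    lock = threading.Lock()
    workers = [threading.Thread(target=subscriber, args=(i, events, results, lock))
               for i in range(n_workers)]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return results
```

Pre-filling the queue keeps the sketch deterministic; with a real broker, subscribers keep pulling as new events arrive.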

Architectural questions for a better decision

- Push-based vs. pull-based:

Webhooks deliver, or push, events to your clients’ services, which then have to absorb the resulting back pressure. That is understandable, but it can slow your customers down. A scalable message broker that supports consumption relieves clients of this burden by letting them pull events when they have the capacity to process them.

- Implementing a server vs. implementing a broker SDK:

For your clients’ services to receive webhooks, they need a server that listens for incoming events. This means managing routing and middleware, opening ports, and securing network access, and the always-on listener adds to the service’s overall resource consumption, including memory.
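For a sense of what running such a listener involves, here is a minimal receiver sketch using only Python’s standard library. The `/webhooks` path, the port, and the JSON payload are assumptions; a production receiver would also need TLS, verification that requests really come from the provider, and a process that stays up to accept deliveries.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

received = []  # events this client has accepted so far


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Only accept deliveries on the (hypothetical) path registered with the provider.
        if self.path != "/webhooks":
            self.send_response(404)
            self.end_headers()
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            event = json.loads(self.rfile.read(length))
        except ValueError:  # covers JSONDecodeError and bad UTF-8
            self.send_response(400)
            self.end_headers()
            return
        received.append(event)
        self.send_response(200)  # tells the sender the delivery succeeded
        self.end_headers()

    def log_message(self, format, *args):
        pass  # silence the default per-request logging


def start_receiver(port: int = 8080) -> HTTPServer:
    """Bind an inbound port and serve requests in a background thread.

    127.0.0.1 keeps the sketch local; a real receiver must be reachable
    by the provider, which means exposing this port to the network.
    """
    server = HTTPServer(("127.0.0.1", port), WebhookHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


if __name__ == "__main__":
    # Self-check: start on a free port (0) and post one event to it.
    server = start_receiver(port=0)
    url = f"http://127.0.0.1:{server.server_address[1]}/webhooks"
    req = urllib.request.Request(url, data=b'{"type": "order.placed"}',
                                 headers={"Content-Type": "application/json"}, method="POST")
    with urllib.request.urlopen(req, timeout=5) as resp:
        print(resp.status, received)
    server.shutdown()
    server.server_close()
```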

Pull-based consumption removes most of these requirements. Because the traffic is egress (outgoing) rather than ingress (incoming), there is no need to set up a server or open ports. Instead, the client’s service initiates the communication, which significantly reduces complexity.

- Retry:

Services crash or become unavailable for many reasons. Missing some webhook deliveries may be harmless, but missing others can cause critical issues, such as incomplete datasets in which orders are never recorded in the CRM or new shipping instructions go unprocessed. A robust retry mechanism is therefore crucial. It can be built into the webhook system itself or provided through an additional endpoint within the service.
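A retry mechanism inside a webhook system commonly resends failed deliveries with exponential backoff. The sketch below shows one such shape, not a prescribed design; the `send` callable, the attempt limit, and the delay values are assumptions, and `sleep` is injectable only so the logic can be exercised without waiting.

```python
import random
import time
from typing import Callable


def deliver_with_retry(send: Callable[[], int], max_attempts: int = 5,
                       base_delay: float = 0.5, max_delay: float = 30.0,
                       sleep: Callable[[float], None] = time.sleep) -> bool:
    """Call `send` until it returns a 2xx status or attempts run out.

    `send` is a hypothetical callable that performs one webhook POST and
    returns the HTTP status code, raising OSError on network failure
    (urllib's URLError and HTTPError are OSError subclasses).
    """
    for attempt in range(max_attempts):
        try:
            status = send()
            if 200 <= status < 300:
                return True
        except OSError:
            pass  # connection refused, timeout, DNS failure, ...
        if attempt < max_attempts - 1:
            # Exponential backoff with "full jitter" so retries from many senders do not align.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))
    return False  # the sender must still decide what to do with this event
```

Even with retries in place, events that exhaust their attempts are lost unless the sender also stores them somewhere, which is extra machinery the webhook provider has to build.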

In contrast, with a message broker, consumers acknowledge events only after processing them, so an event that was never acknowledged can be delivered again. A retry policy is still needed in most cases, but it is typically simpler and more native than handling retries with webhooks.

- Persistence:

Standard webhook systems generally do not persist events for later audits and replays, a capability that persistent message brokers provide natively.

- Replay:

Again, it comes down to the user or developer experience you aim to provide. Setting up an endpoint that lets users retrieve past events is feasible, but it demands careful handling, intricate business logic, an extra database, and added complexity for the client. With a message broker that supports replay, the process shrinks to a line or two of code.
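To show why replay becomes cheap once events are persisted, here is a toy in-memory log in which every event keeps a stable offset and a consumer can move its position with `seek`. The class names, the count-based retention rule, and the method names are illustrative assumptions, not any broker’s SDK; real brokers persist to disk and typically apply time- or size-based retention.

```python
from dataclasses import dataclass, field
from typing import Any, List, Tuple


@dataclass
class EventLog:
    """Toy append-only log: events keep their offsets, so retained events can be re-read."""
    retention: int = 1000  # hypothetical policy: keep at most this many events
    _events: List[Any] = field(default_factory=list, init=False)
    _first_offset: int = field(default=0, init=False)  # offset of the oldest retained event

    def append(self, event: Any) -> int:
        self._events.append(event)
        if len(self._events) > self.retention:
            self._events.pop(0)  # enforce retention by dropping the oldest event
            self._first_offset += 1
        return self._first_offset + len(self._events) - 1  # offset of the new event

    def read(self, offset: int, max_events: int = 10) -> List[Tuple[int, Any]]:
        """Return up to max_events (offset, event) pairs starting at `offset`."""
        start = max(offset, self._first_offset) - self._first_offset
        window = self._events[start:start + max_events]
        return [(self._first_offset + i, e) for i, e in enumerate(window, start)]


class Consumer:
    def __init__(self, log: EventLog):
        self.log = log
        self.offset = 0  # next offset this consumer will read

    def poll(self, max_events: int = 10) -> List[Any]:
        batch = self.log.read(self.offset, max_events)
        if batch:
            self.offset = batch[-1][0] + 1
        return [e for _, e in batch]

    def seek(self, offset: int) -> None:
        """Replay: rewind (or skip ahead) to any offset still covered by retention."""
        self.offset = offset
```

Replaying is just `consumer.seek(earlier_offset)` followed by `consumer.poll()`; no extra endpoint or database is involved because the log already holds the events.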

- Throttling:

Throttling is a technique used in computing and networking to control the flow of data, requests, or operations so that a system or service is not overwhelmed. It limits the rate or quantity of incoming or outgoing data, requests, or actions. The main challenge lies not in implementing throttling but in managing distinct access levels for different customers. Consider an enterprise client whose throughput needs are notably higher than everyone else’s. To accommodate it, you would need either a multi-tenant webhook system built to support diverse demands or a message broker or streaming platform designed to handle such differential requirements.
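Per-customer access levels can be sketched with one token bucket per tenant, sized by plan. This is a generic, swapped-in technique (the token bucket algorithm), not how Memphis or any specific broker implements throttling; the plan names and limits are hypothetical, and the clock is injectable only so the behavior can be checked deterministically.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows `rate` events per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so a new tenant can burst immediately
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical plan limits: (events per second, burst size).
PLANS = {"standard": (10, 20), "enterprise": (500, 1000)}


class TenantThrottler:
    """One independent bucket per tenant, so a busy tenant cannot starve the others."""

    def __init__(self, tenant_plans: Dict[str, str],
                 clock: Callable[[], float] = time.monotonic):
        self.buckets = {tenant: TokenBucket(*PLANS[plan], clock=clock)
                        for tenant, plan in tenant_plans.items()}

    def allow(self, tenant: str) -> bool:
        return self.buckets[tenant].allow()
```

Changing a customer’s limits then amounts to swapping its bucket when its plan changes, which is the kind of per-tenant configuration the paragraph above calls for.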

Memphis as a tailor-made solution for the task

| Feature | Memphis |
| --- | --- |
| Message consumption model | Memphis.dev operates as a pull-based streaming platform in which clients actively pull and consume data from the broker. |
| Protocols and SDKs | Protocols: NATS, Kafka, and REST. SDKs: Go, Python, Node.js, NestJS, TypeScript, .NET, Java, Rust. |
| Retry | Memphis provides a built-in retry system that maintains client state and offsets, even across disconnections. This configurable mechanism resends unacknowledged events until they are acknowledged or until the maximum number of retries is reached. |
| Persistence | Memphis persists messages by assigning a retention policy to each topic and message. |
| Replay | Clients can move their active offset, which makes it easy to read and replay any past event that is still stored under the retention policy. |
| Throttling | Throttling can be enabled per customer, per tenant, or per connection. It is managed entirely via a REST API and can be configured from your monitoring or billing system based on each client’s subscription plan. |
| Multi-tenancy | A pivotal feature of Memphis is the segregation of distinct customers into entirely separate storage and compute environments, which supports compliance and prevents interference from neighboring tenants. |
| Dead-letter | Dead-letter stations, called “dead-letter queues” in some messaging systems, are both a concept and a practical tool essential for client debugging. They let you isolate and “recycle” unconsumed messages instead of discarding them, so you can diagnose why their processing failed. |
| Notifications | Memphis also provides a notifications mechanism, sending Slack or email notifications directly to your clients about unconsumed events and various other scenarios, which eases their workflow. |
| Cost | Predictable, efficient volume-based pricing based on the total estimated monthly amount of data. That’s it. There are no hidden charges, and you always know exactly what your cost will be at any stage of growth. |
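The Retry and Dead-letter rows describe a common broker pattern: unacknowledged events are redelivered up to a limit and then set aside instead of being dropped. The toy queue below sketches that pattern in plain Python. It is not the Memphis SDK; `ToyQueue`, `max_deliveries`, and treating a handler exception as a missing acknowledgment are all assumptions made for illustration.

```python
from collections import deque
from typing import Any, Callable, Deque, List, Tuple


class ToyQueue:
    """At-least-once delivery: an event leaves the queue only when acknowledged,
    or moves to a dead-letter list after max_deliveries failed attempts."""

    def __init__(self, max_deliveries: int = 3):
        self.max_deliveries = max_deliveries
        self.pending: Deque[Tuple[Any, int]] = deque()  # (event, deliveries so far)
        self.dead_letter: List[Any] = []

    def publish(self, event: Any) -> None:
        self.pending.append((event, 0))

    def consume(self, handler: Callable[[Any], None]) -> None:
        """Drain the queue; a handler that raises counts as a missing ack."""
        while self.pending:
            event, deliveries = self.pending.popleft()
            deliveries += 1
            try:
                handler(event)  # returning normally acts as the acknowledgment
            except Exception:
                if deliveries >= self.max_deliveries:
                    # Keep the event for debugging instead of discarding it.
                    self.dead_letter.append(event)
                else:
                    self.pending.append((event, deliveries))  # redeliver later
```

A transiently failing event succeeds on a later delivery, while a permanently failing one ends up in `dead_letter`, where it can be inspected to diagnose why processing failed.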

We are still iterating on the subject, so if you have any thoughts or ideas, I would love to learn from them: idan@memphis.dev